F5SPKEgress¶

Overview¶

This overview discusses the F5SPKEgress CR. For the full list of CRs, refer to the SPK CRs overview. The Service Proxy for Kubernetes (SPK) F5SPKEgress Custom Resource (CR) enables egress connectivity for internal Pods requiring access to external networks. The F5SPKEgress CR enables egress connectivity using either Source Network Address Translation (SNAT), or the DNS/NAT46 feature that supports communication between internal IPv4 Pods and external IPv6 hosts. The F5SPKEgress CR must also reference an F5SPKDnscache CR to provide high-performance DNS caching.

Note: The DNS/NAT46 feature does not rely on Kubernetes IPv4/IPv6 dual-stack added in v1.21.

This overview describes simple scenarios for configuring egress traffic using SNAT and DNS/NAT46 with DNS caching.

CR modifications¶

Because the F5SPKEgress CR references a number of additional CRs, F5 recommends that you always delete and reapply the CR, rather than using oc apply to modify the running CR configuration.

Important: The pools of allocated DNS/NAT46 IP address subnets should remain unmodified for the life of the Controller and TMM Pod installation.

Note: Each time you modify egress or DNS, the TMM has to redeploy.

Requirements¶

Ensure you have:

- Configured and installed an external and internal F5SPKVlan CR.

- DNS/NAT46 only: Installed the dSSM database Pods.

Egress SNAT¶

SNATs modify the source IP address of egress packets leaving the cluster. When the Service Proxy Traffic Management Microkernel (TMM) receives a packet from an internal Pod, it translates the source IP address of the outgoing (egress) packet to a configured SNAT IP address. Using the F5SPKEgress CR, you can apply SNAT IP addresses using either SNAT pools or SNAT automap.

SNAT pools

SNAT pools are lists of routable IP addresses, used by Service Proxy TMM to translate the source IP address of egress packets. SNAT pools provide a greater number of available IP addresses, and offer more flexibility for defining the SNAT IP addresses used for translation. For more background information and to enable SNAT pools, review the F5SPKSnatpool CR guide.

SNAT automap

SNAT automap uses Service Proxy TMM’s external F5SPKVlan IP address as the source IP for egress packets. SNAT automap is easier to implement, and conserves IP address allocations. To use SNAT automap, leave the spec.egressSnatpool parameter undefined (default). Use the installation procedure below to enable egress connectivity using SNAT automap.

Note: In clusters with multiple SPK Controller instances, ensure the IP addresses defined in each F5SPKSnatpool CR do not overlap.
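One way to check for overlapping SNAT addresses before installing the CRs is sketched below. It is a minimal illustration, not part of SPK: the `snatpools` dictionary, the Controller labels, and the helper names are hypothetical, and the address lists are assumed to have been copied by hand from the `addressList` of each F5SPKSnatpool CR.

```python
from ipaddress import ip_address
from itertools import combinations

# Hypothetical input: the addressList of each F5SPKSnatpool CR, keyed by a
# label chosen for the SPK Controller instance that owns it.
snatpools = {
    "controller-a": [["2002::10:50:20:1", "2002::10:50:20:2"],
                     ["2002::10:50:20:3", "2002::10:50:20:4"]],
    "controller-b": [["2002::10:50:20:4", "2002::10:50:20:5"]],
}


def flatten(address_list):
    """Collapse the nested per-TMM lists into one set of address objects."""
    return {ip_address(addr) for group in address_list for addr in group}


def find_overlaps(pools):
    """Return {(label_a, label_b): [shared addresses]} for every pair of pools."""
    overlaps = {}
    for (name_a, list_a), (name_b, list_b) in combinations(pools.items(), 2):
        shared = flatten(list_a) & flatten(list_b)
        if shared:
            overlaps[(name_a, name_b)] = sorted(shared)
    return overlaps


overlaps = find_overlaps(snatpools)
for pair, addresses in overlaps.items():
    print(pair, [str(addr) for addr in addresses])
```

Parsing the strings with `ip_address` means two different spellings of the same IPv6 address still compare as equal.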

Parameters¶

The parameters used to configure Service Proxy TMM for SNAT automap:

| Parameter | Description |
|---|---|
| spec.dualStackEnabled | Enables creating both IPv4 and IPv6 wildcard virtual servers for egress connections: true or false (default). |
| spec.egressSnatpool | References an installed F5SPKSnatpool CR using the spec.name parameter, or applies SNAT automap when undefined (default). |

Installation¶

Use the following steps to configure the F5SPKEgress CR for SNAT automap, and verify the installation.

Copy the F5SPKEgress CR to a YAML file, and set the namespace parameter to the Controller’s Project:

apiVersion: "k8s.f5net.com/v1"
kind: F5SPKEgress
metadata:
  name: egress-crd
  namespace: <project>
spec:
  dualStackEnabled: <true|false>
  egressSnatpool: ""

In this example, the CR installs to the spk-ingress Project:

apiVersion: "k8s.f5net.com/v1"
kind: F5SPKEgress
metadata:
  name: egress-crd
  namespace: spk-ingress
spec:
  dualStackEnabled: true
  egressSnatpool: ""

Install the F5SPKEgress CR:

oc apply -f <file name>

In this example, the CR file is named spk-egress-crd.yaml:

oc apply -f spk-egress-crd.yaml

Internal Pods can now connect to external resources using the external F5SPKVlan self IP address.

To verify traffic processing statistics, log in to the Debug Sidecar:

oc exec -it deploy/f5-tmm -c debug -n <project> -- bash

In this example, the debug sidecar is in the spk-ingress Project:

oc exec -it deploy/f5-tmm -c debug -n spk-ingress -- bash

Run the following tmctl command:

tmctl -d blade virtual_server_stat -s name,clientside.tot_conns

In this example, 3 IPv4 connections, and 2 IPv6 connections have been initiated by internal Pods:

name               clientside.tot_conns
-----------------  --------------------
egress-ipv6        2
egress-ipv4        3

DNS/NAT46¶

Overview¶

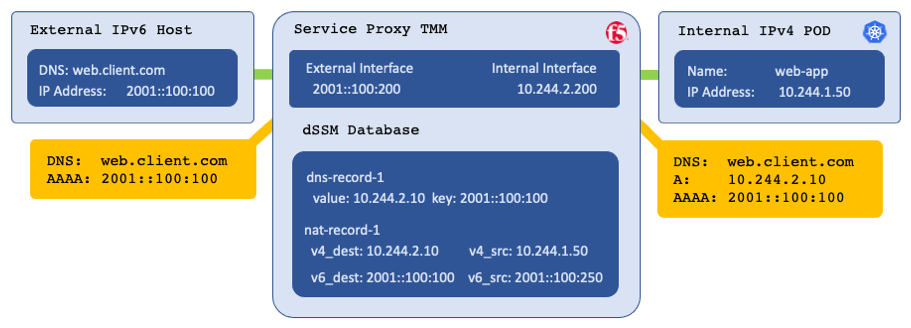

When the Service Proxy Traffic Management Microkernel (TMM) is configured for DNS/NAT46, it acts as both a domain name system (DNS) and network address translation (NAT) gateway, enabling connectivity between IPv4 and IPv6 hosts. Kubernetes DNS enables connectivity between Pods and Services by resolving their DNS requests. When Kubernetes DNS is unable to resolve a DNS request, it forwards the request to an external DNS server for resolution. When the Service Proxy TMM is positioned as a gateway for forwarded DNS requests, replies from external DNS servers are processed by TMM as follows:

- When the reply contains only a type A record, it returns unchanged.

- When the reply contains both type A and AAAA records, it returns unchanged.

- When the reply contains only a type AAAA record, TMM performs the following:

  - Create a new type A database (DB) entry pointing to an internal IPv4 NAT address.

  - Create a NAT mapping in the DB between the internal IPv4 NAT address, and the external IPv6 address in the response.

  - Return the new type A record, and the original type AAAA record.

Internal Pods now connect to the internal IPv4 NAT address, and Service Proxy TMM translates the packet to the external IPv6 host, using a public IPv6 SNAT address. All TCP IPv4 and IPv6 traffic will now be properly translated, and flow through Service Proxy TMM.
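The reply-handling rules above can be sketched as a small decision function. This is an illustrative model only, not TMM's implementation: the function name, the list-based pool of free NAT addresses, and the dictionary standing in for the dSSM database are all assumptions, and pool exhaustion is not modeled.

```python
def process_reply(a_records, aaaa_records, free_nat_ips, nat_db):
    """Model how a DNS reply would be rewritten before it reaches the Pod.

    a_records / aaaa_records: address strings from the external DNS reply.
    free_nat_ips: hypothetical list of unused internal IPv4 NAT addresses.
    nat_db: dict standing in for the DB; maps IPv4 NAT address -> IPv6 address.
    Returns the (A, AAAA) record lists handed back to the client.
    """
    # A only, or both A and AAAA: the reply is returned unchanged.
    if a_records or not aaaa_records:
        return list(a_records), list(aaaa_records)

    # AAAA only: create a type A entry and a NAT mapping for each address.
    new_a_records = []
    for ipv6 in aaaa_records:
        ipv4 = free_nat_ips.pop(0)   # take the next free internal NAT address
        nat_db[ipv4] = ipv6          # NAT mapping: internal IPv4 -> external IPv6
        new_a_records.append(ipv4)

    # Return the new type A records together with the original AAAA records.
    return new_a_records, list(aaaa_records)


nat_db = {}
pool = ["10.40.100.1", "10.40.100.2"]
unchanged = process_reply(["192.0.2.10"], [], pool, nat_db)
translated = process_reply([], ["2002::10:1:1:1"], pool, nat_db)
print(unchanged)
print(translated)
print(nat_db)
```

In the second call the Pod receives an A record for 10.40.100.1, and the mapping stored in `nat_db` is what lets the gateway translate later packets sent to that address toward the IPv6 host.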

Example DNS/NAT46 translation:

Parameters¶

The table below describes the F5SPKEgress CR spec parameters used to configure DNS/NAT46:

| Parameter | Description |
|---|---|
| dnsNat46Enabled | Enable or disable the DNS/NAT46 feature: true or false (default). |
| dnsNat46Ipv4Subnet | The pool of private IPv4 addresses used to create DNS A records for the internal Pods. |
| maxTmmReplicas | The maximum number of TMM Pods installed in the Project. This number should equal the number of Self IP addresses. |
| maxReservedStaticIps | The number of IP addresses to reserve from the dnsNat46Ipv4Subnet for manual DNS46 mappings. All non-reserved IP addresses are allocated to the TMM replicas. Use this formula to determine the number of non-reserved IP addresses allocated to each TMM replica: (dnsNat46Ipv4Subnet - maxReservedStaticIps) / maxTmmReplicas. See Reserving DNS46 IPs below. |
| dualStackEnabled | Creates an IPv6 wildcard virtual server for egress connections: true or false (default). |
| nat64Enabled | Enables DNS64/NAT64 translations for egress connections: true or false (default). |
| egressSnatpool | Specifies an F5SPKSnatpool CR to reference using the spec.name parameter. SNAT automap is used when undefined (default). |
| dnsNat46PoolIps | A pool of IP addresses representing external DNS servers, or gateways to reach the DNS servers. |
| dnsNat46SorryIp | IP address for Oops Page if the NAT pool becomes exhausted. |
| dnsCacheName | Specifies the required F5SPKDnscache CR by concatenating the CR's metadata.namespace and metadata.name parameters with a hyphen (-) character. For example, dnsCacheName: dnscache-cr. |
| dnsRateLimit | Specifies the DNS request rate limit per second: 0 (disabled) to 4294967295. The default value is 0. |
| debugLogEnabled | Enables debug logging for DNS46 translations: true or false (default). |

The table below describes the F5SPKDnscache CR parameters used to configure DNS/NAT46:

Note: DNS responses remain cached for the duration of the DNS record TTL.

| Parameter | Description |
|---|---|
| metadata.name | The name of the installed F5SPKDnscache CR. This will be referenced by an F5SPKEgress CR. |
| metadata.namespace | The Project name of the installed F5SPKDnscache CR. This will be referenced by an F5SPKEgress CR. |
| spec.cacheType | The DNS cache type: net-resolver is the only cache type supported. |
| spec.netResolver.forwardZones | Specifies a list of Domain Names and service ports that TMM will resolve and cache. |
| spec.netResolver.forwardZones.forwardZone | Specifies the Domain Name that TMM will resolve and cache. |
| spec.netResolver.forwardZones.nameServers | Specifies a list of IP addresses representing the external DNS server(s). |
| spec.netResolver.forwardZones.nameServers.ipAddress | Must be set to an IP address specified in the F5SPKEgress dnsNat46PoolIps parameter. |
| spec.netResolver.forwardZones.nameServers.port | The service port of the DNS server to query for DNS resolution. |

DNS gateway¶

For DNS/NAT46 to function properly, it is important to enable Intelligent CNI 2 (iCNI2) when installing the SPK Controller. With iCNI2 enabled, internal Pods use the Service Proxy Traffic Management Microkernel (TMM) as their default gateway. It is important that Service Proxy TMM intercepts and processes all internal DNS requests.

Upstream DNS¶

The F5SPKEgress dnsNat46PoolIps parameter and the F5SPKDnscache nameServers.ipAddress parameter set the upstream DNS server that Service Proxy TMM uses to resolve DNS requests. This configuration enables you to define a non-reachable DNS server on the internal Pods, and have TMM perform DNS name resolution. For example, Pods can use resolver IP address 1.2.3.4 to request DNS resolution from Service Proxy TMM, which then proxies requests and responses from the configured upstream DNS server.
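Because every nameServers.ipAddress value must also appear in dnsNat46PoolIps, it can help to compare the two CRs before applying them. The sketch below assumes the spec sections of both CRs have already been loaded into plain Python dictionaries (for example with a YAML parser); the function name and the sample data are hypothetical.

```python
# Hypothetical spec sections, mirroring the field names used by the two CRs.
dnscache_spec = {
    "cacheType": "net-resolver",
    "netResolver": {
        "forwardZones": [
            {"forwardZone": "example.net",
             "nameServers": [{"ipAddress": "10.20.2.216", "port": 53}]},
            {"forwardZone": "internal.org",
             "nameServers": [{"ipAddress": "10.20.2.217", "port": 53}]},
        ]
    },
}
egress_spec = {"dnsNat46Enabled": True, "dnsNat46PoolIps": ["10.20.2.216"]}


def unmatched_name_servers(dnscache_spec, egress_spec):
    """List (forwardZone, ipAddress) pairs that are missing from dnsNat46PoolIps."""
    pool_ips = set(egress_spec.get("dnsNat46PoolIps", []))
    missing = []
    for zone in dnscache_spec["netResolver"]["forwardZones"]:
        for server in zone["nameServers"]:
            if server["ipAddress"] not in pool_ips:
                missing.append((zone["forwardZone"], server["ipAddress"]))
    return missing


missing = unmatched_name_servers(dnscache_spec, egress_spec)
print(missing)
```

An empty result means every name server in the F5SPKDnscache CR is covered by the F5SPKEgress pool.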

Reserving DNS46 IPs¶

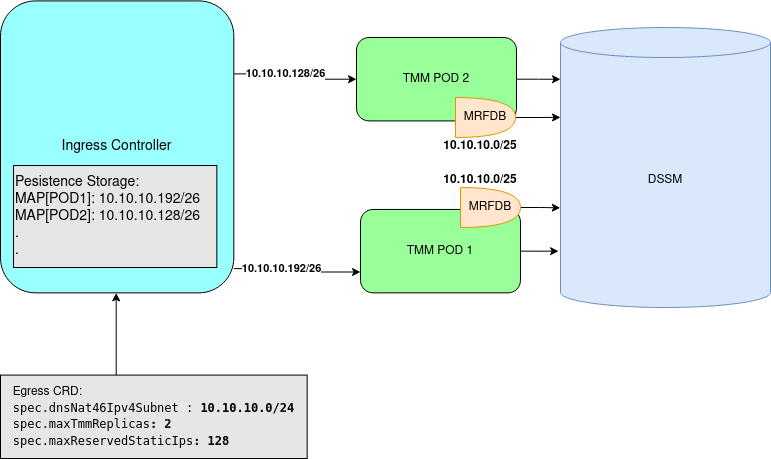

You can reserve DNS46 IP addresses for use when creating a Manual DNS46 entry in the dSSM database. This section demonstrates how the dnsNat46Ipv4Subnet, maxTmmReplicas, and maxReservedStaticIps parameters work together to allocate IP addresses.

- dnsNat46Ipv4Subnet: "10.10.10.0/24" - Specifies 254 usable IP addresses.

- maxReservedStaticIps: 128 - Specifies 128 reserved DNS46 IPs.

- maxTmmReplicas: 2 - Allocates 64 addresses to each of the 2 TMMs: TMM-2 receives 10.10.10.128/26, and TMM-1 receives 10.10.10.192/26.

IP Allocations:
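The arithmetic in this example can be reproduced with Python's standard `ipaddress` module. This is a sketch under stated assumptions rather than the Controller's actual allocation algorithm: it counts every address in the prefix (256 for a /24, which is what yields 64 per TMM), rounds each share down to a power-of-two block, and takes the highest blocks in the subnet. The function name is hypothetical, and which TMM receives which block is not modeled.

```python
from ipaddress import ip_network


def tmm_blocks(subnet, max_reserved_static_ips, max_tmm_replicas):
    """Return (addresses per TMM, list of per-TMM blocks) for the given settings."""
    network = ip_network(subnet)
    # Non-reserved addresses, split evenly across the TMM replicas.
    per_tmm = (network.num_addresses - max_reserved_static_ips) // max_tmm_replicas
    if per_tmm < 1:
        raise ValueError("no addresses left for the TMM replicas")
    # Assumption: each share is rounded down to a power-of-two sized block.
    host_bits = per_tmm.bit_length() - 1
    prefix = network.max_prefixlen - host_bits
    # Assumption: TMM blocks come from the top of the subnet.
    blocks = list(network.subnets(new_prefix=prefix))[-max_tmm_replicas:]
    return per_tmm, blocks


per_tmm, blocks = tmm_blocks("10.10.10.0/24", 128, 2)
print(per_tmm)
print([str(block) for block in blocks])
```

With the values from this section the sketch yields 64 addresses per TMM and the two blocks 10.10.10.128/26 and 10.10.10.192/26, matching the allocation described above.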

Installation¶

The DNS46 installation requires an F5SPKDnscache CR, which must be installed first. An optional F5SPKSnatpool CR can be installed next, followed by the F5SPKEgress CR. All CRs install to the same Project as the SPK Controller. Use the steps below to configure Service Proxy TMM for DNS46.

Copy one of the example F5SPKDnscache CRs below into a YAML file: Example 1 queries and caches all domains, while Example 2 queries and caches two specific domains:

Example 1:

apiVersion: "k8s.f5net.com/v1"
kind: F5SPKDnscache
metadata:
  name: dnscache-cr
  namespace: spk-ingress
spec:
  cacheType: net-resolver
  netResolver:
    forwardZones:
      - forwardZone: .
        nameServers:
          - ipAddress: 10.20.2.216
            port: 53

Example 2:

apiVersion: "k8s.f5net.com/v1"
kind: F5SPKDnscache
metadata:
  name: dnscache-cr
  namespace: spk-ingress
spec:
  cacheType: net-resolver
  netResolver:
    forwardZones:
      - forwardZone: example.net
        nameServers:
          - ipAddress: 10.20.2.216
            port: 53
      - forwardZone: internal.org
        nameServers:
          - ipAddress: 10.20.2.216
            port: 53

Install the F5SPKDnscache CR:

oc apply -f spk-dnscache-cr.yaml

f5spkdnscache.k8s.f5net.com/spk-egress-dnscache created

Verify the installation:

oc describe f5-spk-dnscache -n spk-ingress | sed '1,/Events:/d'

The command output will indicate the spk-controller has added/updated the CR:

Type    Reason         From            Message
----    ------         ----            -------
Normal  Added/Updated  spk-controller  F5SPKDnscache spk-ingress/spk-egress-dnscache was added/updated
Normal  Added/Updated  spk-controller  F5SPKDnscache spk-ingress/spk-egress-dnscache was added/updated

Copy the example F5SPKSnatpool CR to a text file:

In this example, up to two TMMs can translate egress packets, each using two IPv6 addresses:

apiVersion: "k8s.f5net.com/v1"
kind: F5SPKSnatpool
metadata:
  name: "spk-dns-snat"
  namespace: "spk-ingress"
spec:
  name: "egress_snatpool"
  addressList:
    - - 2002::10:50:20:1
      - 2002::10:50:20:2
    - - 2002::10:50:20:3
      - 2002::10:50:20:4

Install the F5SPKSnatpool CR:

oc apply -f egress-snatpool-cr.yaml

f5spksnatpool.k8s.f5net.com/spk-dns-snat created

Verify the installation:

oc describe f5-spk-snatpool -n spk-ingress | sed '1,/Events:/d'

The command output will indicate the spk-controller has added/updated the CR:

Type    Reason         From            Message
----    ------         ----            -------
Normal  Added/Updated  spk-controller  F5SPKSnatpool spk-ingress/spk-dns-snat was added/updated

Copy the example F5SPKEgress CR to a text file:

In this example, TMM will query the DNS server at 10.20.2.216 and create internal DNS A records for internal clients using the 10.40.100.0/25 subnet minus the number of maxReservedStaticIps.

apiVersion: "k8s.f5net.com/v1"
kind: F5SPKEgress
metadata:
  name: spk-egress-crd
  namespace: spk-ingress
spec:
  egressSnatpool: egress_snatpool
  dnsNat46Enabled: true
  dnsNat46PoolIps:
    - "10.20.2.216"
  dnsNat46Ipv4Subnet: "10.40.100.0/25"
  maxTmmReplicas: 4
  maxReservedStaticIps: 26
  nat64Enabled: true
  dnsCacheName: "dnscache-cr"
  dnsRateLimit: 300

Install the F5SPKEgress CR:

oc apply -f spk-dns-egress.yaml

f5spkegress.k8s.f5net.com/spk-egress-crd created

Verify the installation:

oc describe f5-spk-egress -n spk-ingress | sed '1,/Events:/d'

The command output will indicate the spk-controller has added/updated the CR:

Type    Reason         From            Message
----    ------         ----            -------
Normal  Added/Updated  spk-controller  F5SPKEgress spk-ingress/spk-egress-dns was added/updated

Internal IPv4 Pods requesting access to IPv6 hosts (via DNS queries) can now connect to external IPv6 hosts.

Verify connectivity¶

If you installed the TMM Debug Sidecar, you can verify client connection statistics using the steps below.

Log in to the debug sidecar:

In this example, Service Proxy TMM is in the spk-ingress Project:

oc exec -it deploy/f5-tmm -c debug -n spk-ingress -- bash

Obtain the DNS virtual server connection statistics:

tmctl -d blade virtual_server_stat -s name,clientside.tot_conns

In the example below, egress-dns-ipv4 counts DNS requests, egress-ipv4-nat46 counts new client translation mappings in dSSM, and egress-ipv4 counts connections to outside resources.

name               clientside.tot_conns
-----------------  --------------------
egress-ipv6-nat64  0
egress-ipv4-nat46  3
egress-dns-ipv4    9
egress-ipv4        7
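If you want to track these counters over time, the table can be turned into a dictionary with a few lines of Python. This is a minimal sketch that assumes the two-column layout shown in this example (a name followed by an integer counter); the function name is hypothetical, and the sample text is the output above pasted in by hand.

```python
def parse_virtual_server_stats(text):
    """Map each virtual server name to its connection counter."""
    stats = {}
    for line in text.strip().splitlines():
        fields = line.split()
        # Skip the header and separator rows, which have no numeric counter.
        if len(fields) != 2 or not fields[1].isdigit():
            continue
        stats[fields[0]] = int(fields[1])
    return stats


sample_output = """
name               clientside.tot_conns
-----------------  --------------------
egress-ipv6-nat64  0
egress-ipv4-nat46  3
egress-dns-ipv4    9
egress-ipv4        7
"""

stats = parse_virtual_server_stats(sample_output)
print(stats["egress-dns-ipv4"], stats["egress-ipv4-nat46"])
```

Comparing two snapshots taken a few seconds apart shows whether DNS requests and new NAT46 mappings are still increasing.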

If you experience DNS/NAT46 connectivity issues, refer to the Troubleshooting DNS/NAT46 guide.

Manual DNS46 entry¶

The following steps create a new DNS/NAT46 DB entry, mapping internal IPv4 NAT address 10.1.1.1 to remote IPv6 host 2002::10:1:1:1, and require a running Debug Sidecar container (the default behavior).

Important: Manual entries must only use IP addresses that have been reserved with the maxReservedStaticIps parameter. See Reserving DNS46 IPs above.

Obtain the dSSM Sentinel Service CLUSTER-IP and service PORT(S):

In this example, the dSSM Sentinel Service is in the spk-utilities Project:

oc get svc f5-dssm-sentinel -n spk-utilities

In this example, the dSSM Sentinel Service CLUSTER-IP is 172.30.205.250 and the PORT(S) is 26379:

NAME              TYPE       CLUSTER-IP      PORT(S)
f5-dssm-sentinel  ClusterIP  172.30.205.250  26379/TCP

Connect to the TMM debug sidecar:

In this example, the debug sidecar is in the spk-ingress Project:

oc exec -it deploy/f5-tmm -c debug -n spk-ingress -- bash

Add the DNS46 record to the dSSM DB:

In this example, the DB entry maps IPv4 address 10.1.1.1 to IPv6 address 2002::10:1:1:1.

mrfdb -ipport=172.30.205.250:26379 -serverName=server -type=dns46 -set -key=10.1.1.1 -val=2002::10:1:1:1

View the new DNS46 record entry:

mrfdb -ipport=172.30.205.250:26379 -serverName=server -type=dns46 -display=all

t_dns462002::10:1:1:1 10.1.1.1
t_dns4610.1.1.1 2002::10:1:1:1

To delete the DNS46 entry from the dSSM DB:

mrfdb -ipport=172.30.205.250:26379 -serverName=server -type=dns46 -delete -key=10.1.1.1 -val=2002::10:1:1:1

Test connectivity to the remote host:

curl http://10.1.1.1:8080

Upgrading DNS46 entries¶

Starting in SPK version 1.4.10, DNS46 requires two entries: one entry for DNS 6-to-4 lookups, and one entry for NAT 4-to-6 lookups. The mrfdb tool, introduced in version 1.4.10, creates both entries by default. However, manual DNS46 records created in earlier versions contain only a single entry. The following steps upgrade manual DNS46 entries created in versions 1.4.9 and earlier, and require the Debug Sidecar.

Obtain the dSSM Sentinel Service CLUSTER-IP and service PORT(S):

In this example, the dSSM Sentinel Service is in the spk-utilities Project:

oc get svc f5-dssm-sentinel -n spk-utilities

In this example, the dSSM Sentinel Service CLUSTER-IP is 172.30.205.250 and the PORT(S) is 26379:

NAME              TYPE       CLUSTER-IP      PORT(S)
f5-dssm-sentinel  ClusterIP  172.30.205.250  26379/TCP

Connect to the TMM debug sidecar:

In this example, the debug sidecar is in the spk-ingress Project:

oc exec -it deploy/f5-tmm -c debug -n spk-ingress -- bash

View the existing DNS46 record entries:

mrfdb -ipport=172.30.205.250:26379 -serverName=server -type=dns46 -display=all

In this example, the version 1.4.9 and earlier records contain only a single entry:

t_dns4610.1.1.1 2002::10:1:1:1
t_dns4610.1.1.2 2002::10:1:1:2

Upgrade the DNS46 records in the dSSM DB:

mrfdb -ipport=172.30.205.250:26379 -serverName=server -type=dns46 -set -key=10.1.1.1 -val=2002::10:1:1:1

mrfdb -ipport=172.30.205.250:26379 -serverName=server -type=dns46 -set -key=10.1.1.2 -val=2002::10:1:1:2

View the upgraded DNS46 record entries:

mrfdb -ipport=172.30.205.250:26379 -serverName=server -type=dns46 -display=all

In this example, the version 1.4.10 and later records contain two entries:

t_dns462002::10:1:1:1 10.1.1.1
t_dns4610.1.1.1 2002::10:1:1:1
t_dns462002::10:1:1:2 10.1.1.2
t_dns4610.1.1.2 2002::10:1:1:2
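When many manual records exist, the single-entry ones can be picked out programmatically. The sketch below is an assumption-laden illustration: it treats each displayed line as the `t_dns46` prefix fused to the key, followed by the value (the format inferred from the examples in this section), and it builds the same `mrfdb -set` command used above for every IPv4 entry that lacks its reverse IPv6 entry. The helper names are hypothetical.

```python
from ipaddress import ip_address

PREFIX = "t_dns46"  # key prefix seen in the display output above


def parse_entries(display_output):
    """Turn 't_dns46<key> <value>' lines into a {key: value} dictionary."""
    entries = {}
    for line in display_output.strip().splitlines():
        key, value = line.split()
        if key.startswith(PREFIX):
            entries[key[len(PREFIX):]] = value
    return entries


def upgrade_commands(entries, ipport):
    """Build one mrfdb -set command per IPv4 entry without a reverse entry."""
    commands = []
    for key, value in entries.items():
        is_forward = ip_address(key).version == 4
        if is_forward and entries.get(value) != key:
            commands.append(
                f"mrfdb -ipport={ipport} -serverName=server -type=dns46 "
                f"-set -key={key} -val={value}"
            )
    return commands


sample_output = """
t_dns4610.1.1.1 2002::10:1:1:1
t_dns462002::10:1:1:2 10.1.1.2
t_dns4610.1.1.2 2002::10:1:1:2
"""

commands = upgrade_commands(parse_entries(sample_output), "172.30.205.250:26379")
for command in commands:
    print(command)
```

In this sample only 10.1.1.1 lacks its reverse entry, so a single command is produced; the generated commands are meant to be reviewed and then run inside the debug sidecar.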
