Routing diameter traffic to pods using SPK

Another of the standard Service Proxy for Kubernetes (SPK) Custom Resource Definitions (CRDs) is f5-spk-ingressdiameters.k8s.f5net.com. This CRD configures the Service Proxy Traffic Management Microkernel (TMM) to direct traffic to diameter enabled applications running on one or more pods, using familiar load balancing methods such as round robin, ratio least connection member, ratio member and ratio session. Routes to these pods can be exposed using static routes, or using BGP along with BFD to support dynamic updates. Service Proxy TMM load balances ingress Diameter connections to the Pod Service Endpoints and, by default, creates persistence records using the diameter SESSION-ID[0] Attribute-Value Pair (AVP).
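To make the combination of load balancing and persistence concrete, here is a minimal Python sketch of round robin selection with a persistence table keyed by the Session-Id value. It is an illustration only and not how TMM is implemented: the class name, the endpoint strings, the 60 second default and the choice to refresh a record on every message are all assumptions made for the example.

```python
import itertools
import time


class SessionPersistentBalancer:
    """Round-robin balancer that pins each Diameter Session-Id to one endpoint."""

    def __init__(self, endpoints, persistence_timeout=60.0, clock=time.monotonic):
        if not endpoints:
            raise ValueError("at least one endpoint is required")
        self._cycle = itertools.cycle(list(endpoints))
        self._timeout = persistence_timeout
        self._clock = clock
        self._records = {}  # Session-Id -> (endpoint, expiry time)

    def pick(self, session_id):
        now = self._clock()
        record = self._records.get(session_id)
        if record is not None and record[1] > now:
            endpoint = record[0]          # live record: reuse the same endpoint
        else:
            endpoint = next(self._cycle)  # new or expired session: round robin
        # Assumption: refresh the record on every message so active sessions stay pinned.
        self._records[session_id] = (endpoint, now + self._timeout)
        return endpoint


# Hypothetical pod endpoints and Session-Id values, for illustration only.
lb = SessionPersistentBalancer(["10.128.0.11:3868", "10.128.0.12:3868"])
first = lb.pick("client.example.com;1;1")
second = lb.pick("client.example.com;1;2")
again = lb.pick("client.example.com;1;1")  # same endpoint as `first`
```

Messages that share a Session-Id keep landing on the same endpoint until the record expires, while new sessions continue to rotate through the pool.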

What is Diameter

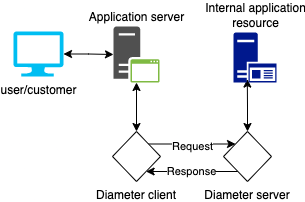

Diameter is a standard protocol used to exchange authentication, authorization and accounting (AAA) information. Diameter is widely seen as the replacement for RADIUS, providing the improved security, reliability and flexibility required for mobile data networks. Diameter supports enhanced policy control, dynamic rules, quality of service, bandwidth allocation and billing support. Diameter is built on a peer-to-peer architecture where each peer can act as a client, an agent or a server in the exchange of data. The client creates the diameter message. The server is the node that receives the message. Agents largely proxy requests between clients and servers but can also make policy decisions about the messages received.

Diameter starts communication with a Capabilities Exchange Request (CER), which includes details about the node, its diameter applications and its security support. The peer responds with a Capabilities Exchange Answer (CEA), which includes the remote node's details and supported applications. Once the connection is established, the nodes maintain it using Device Watchdog Requests and Answers (DWR/DWA).
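As a rough illustration of what these messages look like on the wire, the following Python sketch packs and unpacks the 20-byte Diameter message header defined in RFC 6733. The command codes 257 (CER/CEA) and 280 (DWR/DWA) and the request flag bit come from that RFC; the function names are hypothetical. A real CER also carries AVPs such as Origin-Host and Origin-Realm after the header, which this sketch leaves out.

```python
import struct

HEADER_LEN = 20                  # fixed Diameter header size in bytes
FLAG_REQUEST = 0x80              # "R" bit: set on requests, clear on answers
CMD_CAPABILITIES_EXCHANGE = 257  # CER/CEA
CMD_DEVICE_WATCHDOG = 280        # DWR/DWA


def pack_header(command_code, flags, app_id, hop_by_hop, end_to_end, body_len=0):
    """Build a Diameter header; body_len is the size of the AVPs that follow."""
    length = HEADER_LEN + body_len
    return struct.pack(
        "!IIIII",
        (1 << 24) | length,            # version (1 byte) + message length (3 bytes)
        (flags << 24) | command_code,  # flags (1 byte) + command code (3 bytes)
        app_id,
        hop_by_hop,
        end_to_end,
    )


def unpack_header(data):
    """Decode the first 20 bytes of a Diameter message into a dict."""
    word1, word2, app_id, hop_by_hop, end_to_end = struct.unpack(
        "!IIIII", data[:HEADER_LEN]
    )
    return {
        "version": word1 >> 24,
        "length": word1 & 0xFFFFFF,
        "is_request": bool((word2 >> 24) & FLAG_REQUEST),
        "command_code": word2 & 0xFFFFFF,
        "application_id": app_id,
        "hop_by_hop": hop_by_hop,
        "end_to_end": end_to_end,
    }


# Header of a CER (request flag set) and of a DWA (request flag clear).
cer_header = pack_header(CMD_CAPABILITIES_EXCHANGE, FLAG_REQUEST, 0, 1, 1)
dwa_header = pack_header(CMD_DEVICE_WATCHDOG, 0, 0, 2, 2)
```

The request flag is what distinguishes a CER from a CEA, or a DWR from a DWA, since each pair shares one command code.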

At this point the client and server are ready to exchange data in support of the client's requests to the application server or service.

Basic diameter traffic flow

Assumptions

You have access to an existing healthy OpenShift environment

You have deployed SPK version 1.5 or later

You have a diameter application deployed to the OpenShift environment

You have a diameter client that can initiate traffic

Use case scenario

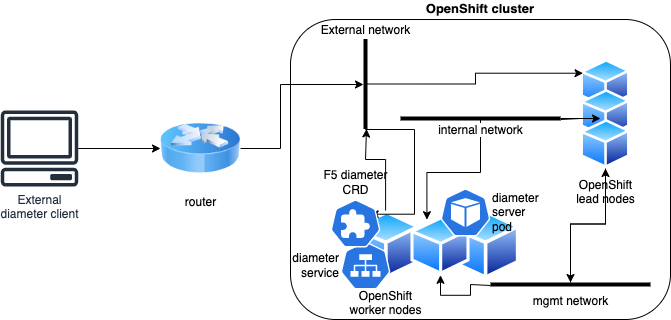

The application owner has deployed a diameter enabled application to the namespace (Project) that is being watched by the F5 SPK Controller.

The SPK administrator will expose the diameter enabled application using the F5 SPK Ingress Diameter Custom Resource.

The client external to the OpenShift cluster is ready to send diameter requests to the deployed SPK service.

A static route exists for the client to send traffic to the SPK exposed diameter ingress.

Logical layout for use case

Environment details

In this example the diameter enabled application is called seagull and it is deployed to the namespace spk-test. The F5 SPK instance has been deployed to spk-diameter.

Note: Seagull is an Open Source multi-protocol traffic generator tool. One of the supported protocols is Diameter.

First confirm that your SPK instance is available and healthy. You can also check the logs for f5-ingress and f5-tmm.

oc get pods -n spk-diameter -o wide

oc logs spk-diameter-f5ingress-<unique value> -c spk-diameter-f5ingress -n spk-diameter

oc logs f5-tmm-<unique value> -c f5-tmm -n spk-diameter

Next check on the diameter application service and endpoints. In this example they are deployed to the spk-test project and the service name is seagull.

oc get svc -n spk-test

oc get endpoints -n spk-test seagull

Diameter CRD installation

Now we can configure and deploy the F5 SPK Ingress Diameter Custom Resource. The apiVersion and kind identify the object type and stay as shown. The metadata namespace must be set to your diameter application namespace, and the metadata name is a custom value you choose for the object. The service name and port must match the service deployed for your diameter application and the port that service exposes. The externalTCP destinationAddress and destinationPort are the address and port that the client will connect to for this service. Finally, define the external origin host and realm under externalSession.

apiVersion: "k8s.f5net.com/v1"
kind: F5SPKIngressDiameter
metadata:
  namespace: spk-test
  name: diameter-app
service:
  name: seagull
  port: 3868
spec:
  externalTCP:
    destinationAddress: "10.1.20.200"
    destinationPort: 3868
  internalTCP:
    enabled: true
  externalSession:
    originHost: "external.example.com"
    originRealm: "example.com"
  internalSession:
    persistenceTimeout: 60
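The matching rules for this resource can be expressed as a small pre-flight check. The Python sketch below is a hypothetical helper, not part of SPK: it takes the Custom Resource as a plain dict and compares it with a simplified summary of the Kubernetes Service. The shape of the `service` dict is an assumption made for the example, and the CR is abbreviated to the fields being checked.

```python
def check_ingress_diameter(cr, service):
    """Return a list of mismatches between the CR and the Kubernetes Service."""
    problems = []
    if cr["metadata"]["namespace"] != service["namespace"]:
        problems.append("CR namespace differs from the Service namespace")
    if cr["service"]["name"] != service["name"]:
        problems.append("service.name does not match the Service name")
    if cr["service"]["port"] not in service["ports"]:
        problems.append("service.port is not exposed by the Service")
    # The walkthrough asks for an external origin host and realm to be defined.
    session = cr["spec"].get("externalSession", {})
    for key in ("originHost", "originRealm"):
        if not session.get(key):
            problems.append(f"spec.externalSession.{key} is not set")
    return problems


# The CR from this example as a dict, plus an assumed summary of the seagull Service.
cr = {
    "metadata": {"namespace": "spk-test", "name": "diameter-app"},
    "service": {"name": "seagull", "port": 3868},
    "spec": {
        "externalTCP": {"destinationAddress": "10.1.20.200", "destinationPort": 3868},
        "externalSession": {
            "originHost": "external.example.com",
            "originRealm": "example.com",
        },
    },
}
service = {"namespace": "spk-test", "name": "seagull", "ports": [3868]}
print(check_ingress_diameter(cr, service))  # prints [] when everything lines up
```

An empty list means the namespace, service name and port agree with the deployed service, which are the values most likely to be mistyped when adapting the example to another application.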

Once you have deployed the F5 SPK Ingress Diameter object, you can display the values assigned.

oc get f5-spk-ingressdiameters.k8s.f5net.com -n spk-test

oc describe f5-spk-ingressdiameters.k8s.f5net.com -n spk-test diameter-app

Debugging

You can monitor the traffic flow in real time using the debug sidecar in the TMM pod. The sidecar comes with tcpdump, which can monitor both the internal and external interfaces for diameter traffic.

Connect to the debug sidecar and display the available interfaces:

oc exec -it deploy/f5-tmm -c debug -n spk-diameter -- bash

tcpdump -D

Assuming your destination and service ports are both 3868, you can monitor for traffic on this port on either the external or internal interface:

tcpdump -i external -nn port 3868

tcpdump -i internal -nn port 3868

You can also use the tmctl tool to query Service Proxy TMM for application traffic processing statistics while connected to the debug sidecar.

tmctl -d blade virtual_server_stat -s name,clientside.tot_conns

Here you can also view pool member connection statistics.

tmctl -d blade pool_member_stat -s pool_name,serverside.tot_conns