
VELOS Diagnostics¶

This section covers some of the diagnostic capabilities of the F5OS platform layer. Inside the TMOS tenant, the familiar BIG-IP diagnostic utilities remain available.

Qkviews¶

VELOS supports the ability to generate qkview reports to collect and bundle configuration and diagnostic data that can be sent to support or uploaded to iHealth. It is important to understand the VELOS architecture when generating qkview reports. Generating a qkview report from the system controller will capture OS data and container information related to the system controller software, while generating a qkview report inside a chassis partition will capture data and container information related to the partition layer. To capture tenant level information, you’ll need to run a qkview report inside the TMOS layer of the tenant.

K02521182: Generating diagnostic data for the VELOS system using the qkview utility

In general, you can use the qkview utility on VELOS systems to automatically collect configuration and diagnostic information from the system. The qkview utility provided in F5OS-C software captures diagnostic information from the VELOS system and associated containers.

Note: The qkview utility on the VELOS system does not capture diagnostic data from tenant BIG-IP systems. To generate diagnostic data for a tenant BIG-IP, log in to the tenant system and perform the relevant procedure in:

K12878: Generating diagnostic data using the qkview utility

The qkview utility on the VELOS system generates machine-readable JavaScript Object Notation (JSON) diagnostic data and combines the data into a single compressed Tape ARchive (TAR) format file. The single TAR file is comprised of embedded TAR files containing the diagnostic data of individual containers running on the system, as well as diagnostic data from the VELOS system. You can upload this file, called a qkview file, to iHealth, or give it to F5 Support to help them troubleshoot any issues.
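Because a qkview file is a TAR archive that embeds further TAR files, its top-level contents can be inspected with ordinary archive tooling before it is uploaded. The sketch below is only an illustration: the member names are invented for the example, and the real internal layout of a qkview file is not documented here. It builds a small stand-in archive in memory and lists the members that look like nested archives.

```python
import io
import tarfile

# File suffixes treated as "embedded archive" for this illustration (an assumption).
ARCHIVE_SUFFIXES = (".tar", ".tar.gz", ".tgz")


def list_embedded_archives(fileobj):
    """Return the names of regular-file members that look like nested TAR archives."""
    # Mode "r:*" lets tarfile detect the compression (gzip, bzip2, xz) on its own.
    with tarfile.open(fileobj=fileobj, mode="r:*") as outer:
        return [
            member.name
            for member in outer.getmembers()
            if member.isfile() and member.name.endswith(ARCHIVE_SUFFIXES)
        ]


def build_sample_qkview():
    """Build a tiny in-memory archive standing in for a real qkview file.

    The member names below are hypothetical and chosen only for the example.
    """
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as outer:
        for name in ("container-a.tar", "container-b.tar", "manifest.json"):
            payload = b"{}"
            info = tarfile.TarInfo(name=name)
            info.size = len(payload)
            outer.addfile(info, io.BytesIO(payload))
    buffer.seek(0)
    return buffer


embedded = list_embedded_archives(build_sample_qkview())
print(embedded)
```

For a file on disk, the same function can be called with `open(path, "rb")` as the argument.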

Note: F5 Support requires a qkview file in all cases in which remote access to the product is not available.

System Controller qkview:

- Use this to investigate problems relating to the controllers themselves, or the controller platform services.

- Collects information for active controller

  - Collects host information, including logs

  - Collects information from each controller platform service container

- Collects info for standby controller

  - Collects host information, including logs

  - Collects information from each controller platform service container

Chassis Partition qkview:

- Use this to investigate problems relating to a chassis partition. For example, a problem with one of the partition services, or one of the blades in that partition

- Collects information for chassis partition services, on the controller for which the partition is active

  - Collects information for each partition service container

- Collects information for partition services, on the controller for which the partition is in standby mode

  - Collects information for each partition service container

- Collects information from each blade in the partition

  - Collects blade host information, including logs

  - Collects information for each partition service container

Qkview Creation and Upload via webUI¶

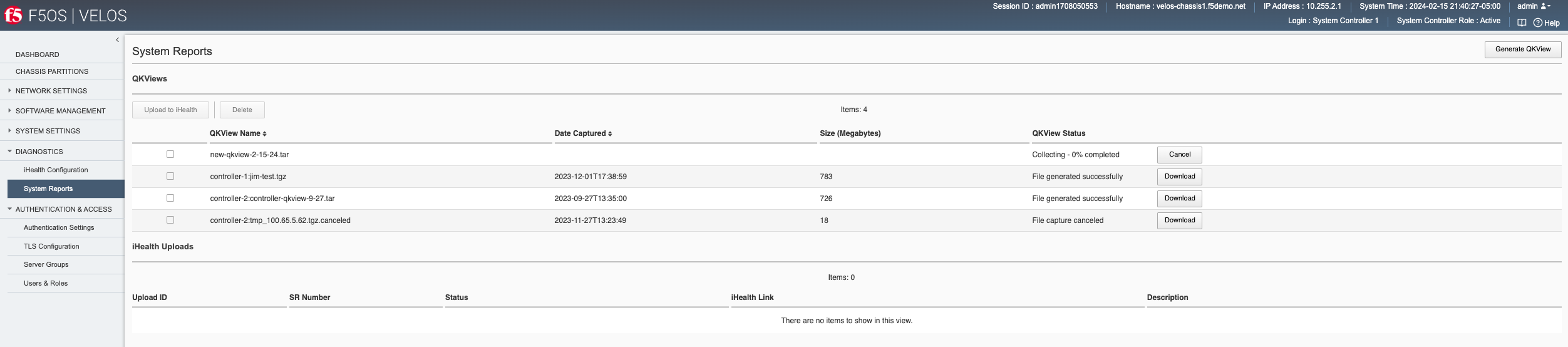

In both the system controller and the chassis partition, a qkview can be generated from the Diagnostics -> System Reports page. From this page you can also download a qkview file (added in F5OS-C 1.7.0) or upload it directly to iHealth, provided your system is allowed to access the Internet.

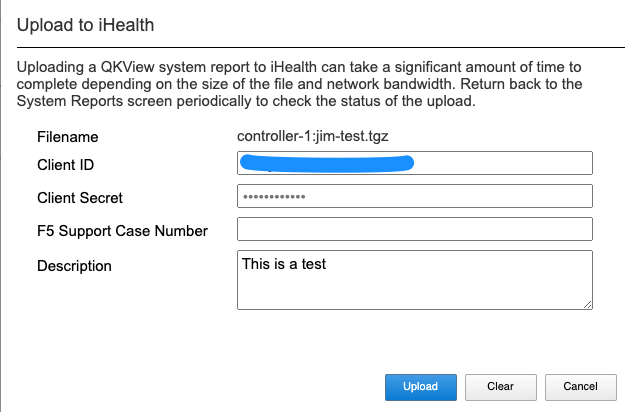

To generate a qkview report, click the Generate QKview button in the upper right-hand corner. It will take some time for the qkview to be generated. Once the qkview is generated, you can click the checkbox next to it, and then select Upload to iHealth. Your iHealth credentials will automatically fill in if you entered them previously using the Diagnostics -> iHealth Configuration page. Note that the iHealth service recently went through authentication enhancements, and some older versions of F5OS-C may not authenticate properly to the iHealth service. You should upgrade to F5OS-C 1.6.x or later to get the new authentication support that is compatible with the iHealth service.

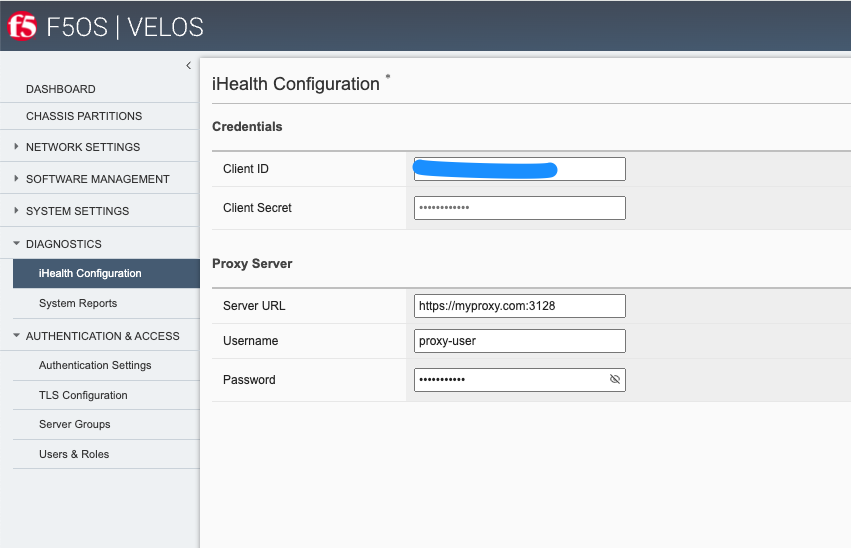

You can configure your iHealth Client ID and Client Secret to authenticate with the new iHealth authentication services using the Diagnostics -> iHealth Configuration page. You may also optionally configure a Proxy Server for iHealth access if your system requires external traffic to be inspected by a proxy server.

Once you have your Client ID and Client Secret configured you can then upload qkviews directly to iHealth. Your credentials will be automatically added to the upload request as seen below. You may also add an optional F5 Support Case Number and Description.

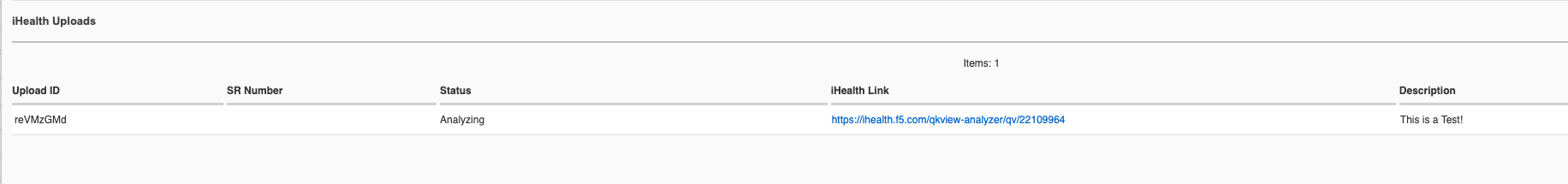

You’ll see the status of the upload as it progresses:

Qkview Creation and Upload via CLI¶

You can configure your iHealth Client ID and Client Secret to authenticate with the new iHealth authentication services via the CLI. Enter config mode, and then use the system diagnostics ihealth config command to configure a clientid and clientsecret.

syscon-1-active(config)# system diagnostics ihealth config clientid XXXXXXXXXXXXXXXXXXXX clientsecret

(<AES encrypted string>): **********************************************************************************

syscon-2-active(config)# commit

Commit complete.

syscon-1-active(config)# do show system diagnostics ihealth

system diagnostics ihealth state server https://ihealth2-api.f5.com/qkview-analyzer/api/qkviews?visible_in_gui=True

system diagnostics ihealth state authserver https://identity.account.f5.com/oauth2/ausp95ykc80HOU7SQ357/v1/token

system diagnostics ihealth state clientid XXXXXXXXXXXXXXXXXXXX

          SERVICE
UPLOAD    REQUEST
ID        NUMBER   STATUS    IHEALTH LINK                                        DESCRIPTION      DETAILS
------------------------------------------------------------------------------------------------------------------------------------------------------------
reVMzGMd           COMPLETE  https://ihealth.f5.com/qkview-analyzer/qv/22109964  This is a Test!  QKView uploaded successfully with 100 percent completion

syscon-1-active(config)#

You may also optionally configure a Proxy Server for iHealth access if your system requires external traffic to be inspected by a proxy server.

syscon-1-active(config)# system diagnostics proxy config ?

Possible completions:

proxy-password password for web proxy server.

proxy-server Server for local web proxy server.

proxy-username username for web proxy server.

syscon-1-active(config)# system diagnostics proxy config

To generate a qkview from the CLI run the command system diagnostics qkview capture.

syscon-1-active# system diagnostics qkview capture

result Qkview file controller-2.qkview is being collected

return code 200

resultint 0

You can view the status of the capture using the command system diagnostics qkview status.

syscon-2-active# system diagnostics qkview status

result {"Busy":true,"Percent":59,"Status":"collecting","Message":"Collecting Data","Filename":"controller-2.qkview"}

resultint 0

syscon-2-active# system diagnostics qkview status

result {"Busy":false,"Percent":100,"Status":"complete","Message":"Completed collection.","Filename":"controller-2.qkview"}

resultint 0

You may also confirm the file has been created by using the file list command, or the system diagnostics qkview list command to see more details about the size and creation date of the file:

syscon-1-active# file list path diags/shared/qkview/

entries {

name jim-test.tgz

date Fri Dec 1 22:38:59 UTC 2023

size 783MB

}

syscon-1-active#

syscon-1-active# system diagnostics qkview list

result {"Qkviews":[{"Filename":"jim-test.tgz","Date":"2023-12-01T17:38:59.08874537-05:00","Size":820585633}]}

resultint 0

syscon-1-active#
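The `result` field printed by system diagnostics qkview list is a JSON document, so it can be decoded by a script. The following is a minimal sketch that uses the sample output above. The helper names are hypothetical. The conversion to 1024-based megabytes is an inference from the fact that 820585633 bytes then matches the 783MB reported by file list, not a documented rule.

```python
import json


def parse_qkview_list(result):
    """Decode the JSON string shown in the `result` field of the CLI output."""
    return json.loads(result)["Qkviews"]


def size_in_mib(size_bytes):
    """Convert a byte count to whole mebibytes (1 MiB = 1024 * 1024 bytes)."""
    return round(size_bytes / (1024 * 1024))


# Sample taken from the CLI output above.
sample = (
    '{"Qkviews":[{"Filename":"jim-test.tgz",'
    '"Date":"2023-12-01T17:38:59.08874537-05:00","Size":820585633}]}'
)

for entry in parse_qkview_list(sample):
    print(entry["Filename"], f'{size_in_mib(entry["Size"])}MB')
```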

Qkview Creation and Upload to iHealth via API¶

A qkview can be generated for the system controller or any chassis partition using the following API call. Note that the endpoint IP address is either that of the system controller or that of the desired chassis partition.

POST https://{{velos_velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-qkview:qkview/f5-system-diagnostics-qkview:capture

In the body of the API call enter the filename of the qkview to be saved.

{

"f5-system-diagnostics-qkview:filename": "qkview{{currentdate}}.tgz"

}

If the generation of a qkview is successful, you’ll receive confirmation like the output below.

{

"f5-system-diagnostics-qkview:output": {

"result": " Warning: Qkview may contain sensitive data such as secrets, passwords and core files. Handle with care. Please send this file to F5 support. \nQkview file my-qkview2022-04-12.tgz is being collected.\nreturn code 200\n ",

"resultint": 0

}

}
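For readers scripting this call outside Postman, the request can be assembled with the Python standard library. This is a sketch under stated assumptions: the `X-Auth-Token` header and the `application/yang-data+json` content type are assumptions about how the API is authenticated and typed, and the host, filename, and token values are placeholders. The request is only constructed here, not sent.

```python
import json
import urllib.request

# RESTCONF path copied from the capture call above.
CAPTURE_PATH = (
    "/restconf/data/openconfig-system:system"
    "/f5-system-diagnostics-qkview:diagnostics"
    "/f5-system-diagnostics-qkview:qkview"
    "/f5-system-diagnostics-qkview:capture"
)


def build_capture_request(host, filename, token):
    """Build (but do not send) the POST request that starts a qkview capture."""
    body = {"f5-system-diagnostics-qkview:filename": filename}
    return urllib.request.Request(
        url=f"https://{host}:8888{CAPTURE_PATH}",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/yang-data+json",  # assumed media type
            "X-Auth-Token": token,  # assumed authentication header
        },
        method="POST",
    )


# Placeholder values for illustration only.
request = build_capture_request("192.0.2.10", "qkview-2024-01-01.tgz", "example-token")
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it. Certificate handling for the management interface is left out of this sketch.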

To check the status of the qkview collection you can use the following API command.

POST https://{{velos_velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-qkview:qkview/f5-system-diagnostics-qkview:status

The output of the command will show the percentage complete of the qkview.

{

"f5-system-diagnostics-qkview:output": {

"result": " {\"Busy\":true,\"Percent\":18,\"Status\":\"collecting\",\"Message\":\"Collecting Data\",\"Filename\":\"my-qkview2022-04-12.tgz\"}\n ",

"resultint": 0

}

}
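Note that the `result` value in this response is itself a JSON document wrapped in a string with surrounding whitespace, so it needs a second decoding step. The sketch below shows one way to poll until collection finishes. `fetch_status` is a hypothetical callable that returns the decoded body of the status call, and the polling interval and limit are arbitrary choices.

```python
import json
import time

OUTPUT_KEY = "f5-system-diagnostics-qkview:output"


def decode_status(response):
    """Extract the status document embedded as a string in the API response."""
    return json.loads(response[OUTPUT_KEY]["result"].strip())


def wait_for_qkview(fetch_status, interval=5.0, max_polls=120, sleep=time.sleep):
    """Poll until the collection is no longer busy, then return the final status.

    fetch_status: hypothetical callable returning the decoded JSON body of the
    status API call. The sleep function is injectable so the loop can be tested.
    """
    for _ in range(max_polls):
        status = decode_status(fetch_status())
        if not status["Busy"]:
            return status
        sleep(interval)
    raise TimeoutError("qkview collection did not finish in time")
```

A caller would supply a `fetch_status` function that issues the POST shown above and returns the parsed JSON body.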

Before uploading your qkview file to iHealth, you must ensure you have set up the proper credentials on your VELOS system. The iHealth service has recently changed its authentication methods. You must now get your client ID and client secret from the myf5.com portal, and then store them on your VELOS system to do direct uploads of qkview files to iHealth. Below is an example of setting up the client ID and client secret via the API.

PUT https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-ihealth:ihealth/f5-system-diagnostics-ihealth:config/f5-system-diagnostics-ihealth:clientid

In the body of the API call, add your client ID:

{

"f5-system-diagnostics-ihealth:clientid": "XXXXXXXXXXXXXXXXXXXXXXX"

}

To confirm the client ID has been set, send the following API call:

GET https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-ihealth:ihealth/f5-system-diagnostics-ihealth:config/f5-system-diagnostics-ihealth:clientid

You should see something like the output below.

{

"f5-system-diagnostics-ihealth:clientid": "XXXXXXXXXXXXXXXXXXXXXXXXXX"

}

Next, you’ll need to enter your client secret from myf5.com. Use the following API call to add your client secret.

PUT https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-ihealth:ihealth/f5-system-diagnostics-ihealth:config/f5-system-diagnostics-ihealth:clientsecret

In the body of the API call, enter the client secret as seen below:

{

"f5-system-diagnostics-ihealth:clientsecret": "XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX"

}

To view the current client secret enter the following API call.

GET https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-ihealth:ihealth/f5-system-diagnostics-ihealth:config/f5-system-diagnostics-ihealth:clientsecret

In the response, you’ll see the encrypted client secret, as shown below.

{

"f5-system-diagnostics-ihealth:clientsecret": "XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX"

}

If your environment does not allow direct access to the Internet and requires a proxy server, you can optionally add the proxy server configuration to the VELOS system so that qkview uploads to iHealth go through the proxy. Below is an example of adding a proxy server configuration via the API.

PUT https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-proxy:proxy/f5-system-diagnostics-proxy:config

In the body of the API call add the proxy server configuration as seen below.

{

"f5-system-diagnostics-proxy:config": {

"f5-system-diagnostics-proxy:proxy-username": "proxy-user",

"f5-system-diagnostics-proxy:proxy-password": "password",

"f5-system-diagnostics-proxy:proxy-server": "https://myproxy.com:3128"

}

}
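When this body is generated by a script, it helps to keep the module prefix in one place and to avoid printing the password in logs. The following sketch builds the same body as above and produces a masked copy for logging. The function names are hypothetical, and the values are the example values from this section.

```python
import json

# Module prefix used by every key in the proxy configuration body above.
PREFIX = "f5-system-diagnostics-proxy"


def proxy_config_body(server, username, password):
    """Return the request body for the proxy configuration PUT call."""
    return {
        f"{PREFIX}:config": {
            f"{PREFIX}:proxy-username": username,
            f"{PREFIX}:proxy-password": password,
            f"{PREFIX}:proxy-server": server,
        }
    }


def redacted(body):
    """Return a copy of the body with the password masked, for safe logging."""
    config = dict(body[f"{PREFIX}:config"])
    config[f"{PREFIX}:proxy-password"] = "********"
    return {f"{PREFIX}:config": config}


body = proxy_config_body("https://myproxy.com:3128", "proxy-user", "password")
print(json.dumps(redacted(body), indent=2))
```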

To view the proxy server configuration, enter the following API call.

GET https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-proxy:proxy

You’ll see the configuration returned in the API response.

{

"f5-system-diagnostics-proxy:proxy": {

"state": {

"proxy-username": "proxy-user",

"proxy-server": "https://myproxy.com:3128"

},

"config": {

"proxy-username": "proxy-user",

"proxy-password": "$8$XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX",

"proxy-server": "https://myproxy.com:3128"

}

}

}

If you’d like to copy the qkview directly to iHealth once it is completed, use the following API command referencing the previously completed qkview file.

POST https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-system-diagnostics-qkview:diagnostics/f5-system-diagnostics-ihealth:ihealth/f5-system-diagnostics-ihealth:upload

In the body of the API call add details with the filename, optional description, and SR number. The call below assumes you have previously stored the proper iHealth credentials.

{

"f5-system-diagnostics-ihealth:qkview-file": "qkview{{currentdate}}.tgz",

"f5-system-diagnostics-ihealth:description": "This is a test qkview",

"f5-system-diagnostics-ihealth:service-request-number": ""

}

The output will confirm the upload has begun.

{

"f5-system-diagnostics-ihealth:output": {

"message": "HTTP/1.1 202 Accepted\r\nLocation: /support/ihealth/status/a0PBQTGW\r\nDate: Tue, 12 Apr 2022 04:19:40 GMT\r\nContent-Length: 0\r\n\r\n",

"errorcode": false

}

}
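The `message` field carries a raw HTTP response, including a `Location` header. A script can pull out the trailing path segment of that header, which appears to identify the upload. Treating that segment as the upload ID is an assumption made for this sketch, and the helper name is hypothetical.

```python
import re

# Match the Location header on its own line; header lines end in \r\n.
LOCATION_RE = re.compile(r"^Location:\s*(\S+)\s*$", re.IGNORECASE | re.MULTILINE)


def upload_id_from_message(message):
    """Return the last path segment of the Location header, or None if absent."""
    match = LOCATION_RE.search(message)
    if match is None:
        return None
    return match.group(1).rsplit("/", 1)[-1]


# Sample message copied from the upload response above.
message = (
    "HTTP/1.1 202 Accepted\r\n"
    "Location: /support/ihealth/status/a0PBQTGW\r\n"
    "Date: Tue, 12 Apr 2022 04:19:40 GMT\r\n"
    "Content-Length: 0\r\n\r\n"
)
print(upload_id_from_message(message))
```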

Qkview Download to Client via API¶

You can download qkviews directly to a client machine using the F5OS API. First, list the contents of the path diags/shared/qkview to see the saved qkview files:

POST https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/f5-utils-file-transfer:file/list

In the body of the API call, add the following path:

{

"f5-utils-file-transfer:path": "diags/shared/qkview"

}

The output should look similar to the output below.

{

"f5-utils-file-transfer:output": {

"entries": [

{

"name": "jim-test.tgz",

"date": "",

"size": "783MB"

},

{

"name": "new-qkview-2-15-24.tar",

"date": "",

"size": "674MB"

}

]

}

}

To download one of the qkview files to the local client machine, enter the following API call.

POST https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/f5-utils-file-transfer:file/f5-file-download:download-file/f5-file-download:start-download

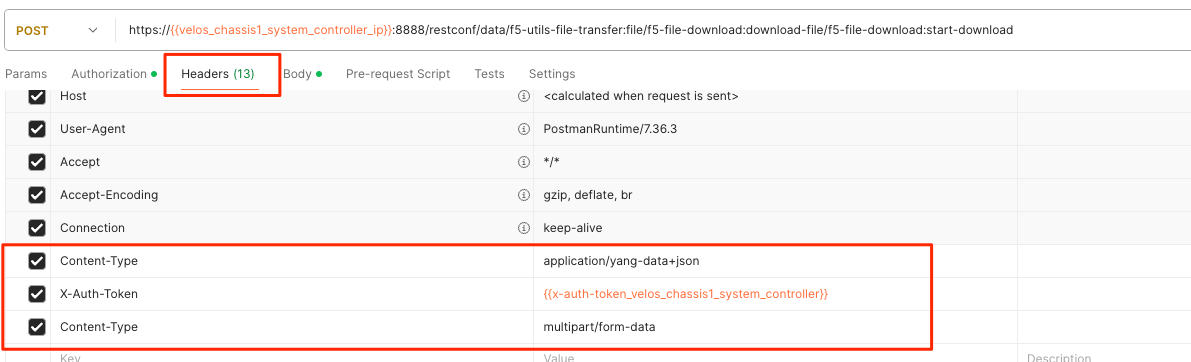

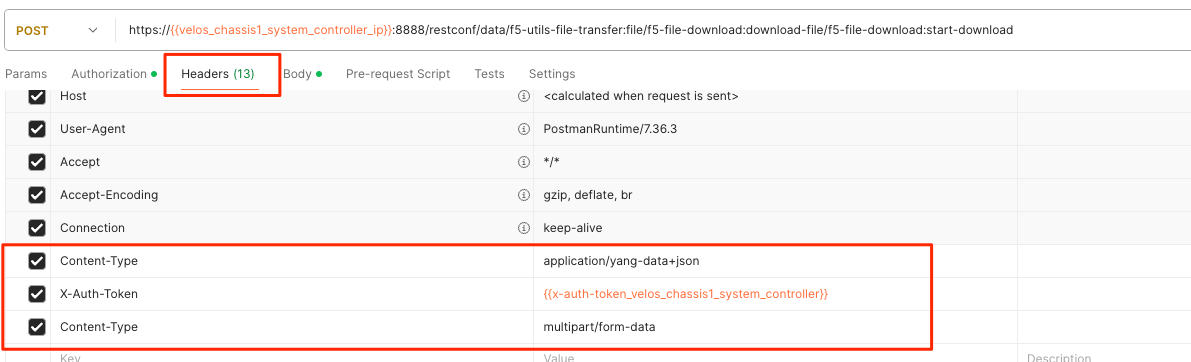

For the Headers section of the Postman request be sure to add the following headers:

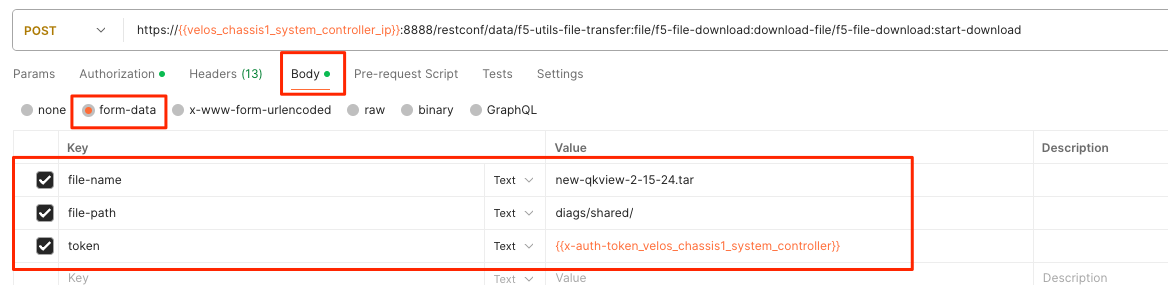

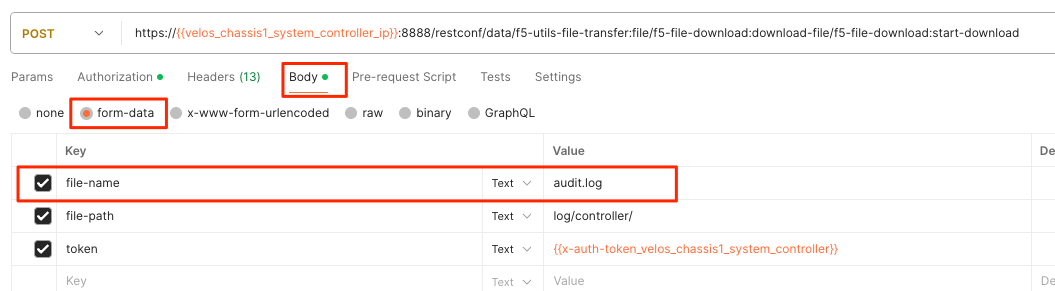

If you are using Postman, in the body of the API call select Body, then select form-data. Then enter the file-name, path, and token as seen below.

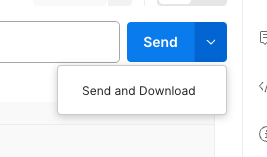

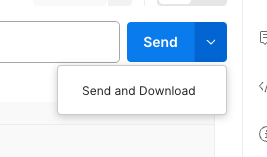

If you are using Postman, instead of clicking Send, click on the arrow next to Send, and then select Send and Download. You will then be prompted to save the file to your local file system.

Logging¶

F5OS has extensive logging and diagnostic capabilities. Logs are stored locally on disk and can optionally be sent to a remote syslog server. In addition, there are multiple logging subsystems that can be made more or less verbose via the Software Component Log Levels. Many functions inside the F5OS layer log their important events to the default velos.log file, which resides in the /log/controller/ path. When Remote Log Servers are added, the contents of this file are also sent to the remote location (in addition to local disk). Many other log files are available locally on the disk for various functions, and some of them can also be sent remotely. As an example, snmp.log records all SNMP requests and traps that the system sends and receives. Another example is audit.log, which captures audit-related information such as who has logged in, what changes were made, who made the changes, and unsuccessful login attempts. This section provides more details on the various logging subsystems and how to configure them.

F5 publishes and maintains a log error catalog for F5OS-C (VELOS) here:

Viewing Logs¶

Viewing Logs from the CLI¶

Logs can be viewed via the F5OS CLI, or they can be downloaded for remote viewing via CLI or webUI. Logs can also be sent to an external syslog location. When troubleshooting specific issues, the logging levels of the logging subsystems (sw-components) can be adjusted temporarily to provide more information.

Many functions inside the F5OS layer will log their events to the main velos.log file that resides in the /var/log_controller path in the underlying system controller shell. In the F5OS CLI the paths are simplified so that you don’t have to know the underlying directory structure. You can use the file list path command to see the files inside the log/controller directory:

syscon-2-active# file list path log/

Possible completions:

confd/ controller/ host/

syscon-2-active# file list path log/controller/

entries {

name afu-cookie

date Wed Aug 23 23:03:27 UTC 2023

size 33B

}

entries {

name audit.log

date Fri Aug 25 15:17:24 UTC 2023

size 6.9MB

}

entries {

name audit.log.1

date Wed Jul 12 16:58:15 UTC 2023

size 11MB

}

entries {

name audit.log.2.gz

date Tue Jun 6 16:43:01 UTC 2023

size 505KB

}

entries {

name audit.log.3.gz

date Thu May 4 22:39:02 UTC 2023

size 500KB

}

entries {

name audit.log.4.gz

date Mon Apr 24 16:22:54 UTC 2023

size 493KB

}

entries {

name audit.log.5.gz

date Tue Apr 4 15:03:32 UTC 2023

size 499KB

}

entries {

name cc-confd

date Thu Aug 24 00:34:34 UTC 2023

size 2.2MB

}

entries {

name cc-confd-hal

date Wed Aug 23 23:02:54 UTC 2023

size 0B

}

entries {

name cc-confd-health

date Fri Aug 25 15:16:58 UTC 2023

size 44MB

}

entries {

name cc-confd-health-diag-agent

date Wed Aug 23 23:02:57 UTC 2023

size 0B

}

entries {

name cc-confd-health.1

date Fri Apr 21 16:19:02 UTC 2023

size 101MB

}

entries {

name cc-confd-init

date Wed Aug 23 23:02:53 UTC 2023

size 388KB

}

entries {

name cc-upgrade.dbg

date Wed Aug 23 23:03:12 UTC 2023

size 277KB

}

entries {

name chassis-manager

date Fri Aug 25 15:17:25 UTC 2023

size 66MB

}

entries {

name chassis-manager.1

date Sun Jul 9 09:51:03 UTC 2023

size 101MB

}

entries {

name chassis-manager.2.gz

date Sat Jul 1 17:59:04 UTC 2023

size 2.9MB

}

entries {

name chassis-manager.3.gz

date Sat Jun 24 02:13:04 UTC 2023

size 2.9MB

}

entries {

name chassis-manager.4.gz

date Fri Jun 16 09:09:04 UTC 2023

size 2.9MB

}

entries {

name chassis-manager.5.gz

date Thu Jun 8 18:58:04 UTC 2023

size 3.0MB

}

entries {

name confd

date Thu Jul 14 17:50:32 UTC 2022

size 0B

}

entries {

name confd_go_standby

date Mon Jan 30 15:57:56 UTC 2023

size 207B

}

entries {

name confd_image_remove

date Mon May 8 15:41:49 UTC 2023

size 6.5KB

}

entries {

name config-object-manager

date Wed Aug 23 23:21:09 UTC 2023

size 23MB

}

entries {

name config-object-manager-hal

date Wed Aug 23 23:02:54 UTC 2023

size 0B

}

entries {

name events/

date Wed Aug 23 23:03:36 UTC 2023

size 4.0KB

}

entries {

name ha

date Thu Aug 24 01:01:25 UTC 2023

size 5.3MB

}

entries {

name ha-hal

date Wed Aug 23 23:02:54 UTC 2023

size 0B

}

entries {

name host-config

date Fri Aug 25 15:17:23 UTC 2023

size 76MB

}

entries {

name host-config-hal

date Wed Aug 23 23:02:57 UTC 2023

size 0B

}

entries {

name host-config.1

date Thu Jul 20 23:48:03 UTC 2023

size 101MB

}

entries {

name host-config.2.gz

date Thu Jun 22 03:52:03 UTC 2023

size 2.8MB

}

entries {

name host-config.3.gz

date Fri May 19 10:59:04 UTC 2023

size 2.8MB

}

entries {

name host-config.4.gz

date Sat Apr 8 17:36:04 UTC 2023

size 2.7MB

}

entries {

name host-config.5.gz

date Sun Mar 12 08:13:04 UTC 2023

size 2.7MB

}

entries {

name http_error_log

date Wed Aug 23 23:03:04 UTC 2023

size 17KB

}

entries {

name httpd/

date Fri May 5 04:24:03 UTC 2023

size 4.0KB

}

entries {

name image-server

date Wed Aug 23 23:27:41 UTC 2023

size 2.6MB

}

entries {

name image-server-dhcp

date Fri Aug 25 15:09:19 UTC 2023

size 14MB

}

entries {

name image-server-hal

date Wed Aug 23 23:03:04 UTC 2023

size 0B

}

entries {

name image-server-httpd

date Thu Jul 14 17:50:37 UTC 2022

size 0B

}

entries {

name image-server-monitor

date Wed Aug 23 23:03:04 UTC 2023

size 87KB

}

entries {

name lcd.log

date Wed Aug 23 23:08:44 UTC 2023

size 123KB

}

entries {

name logrotate.log

date Fri Aug 25 15:17:01 UTC 2023

size 40KB

}

entries {

name logrotate.log.1

date Fri Aug 25 15:11:02 UTC 2023

size 5.1MB

}

entries {

name logrotate.log.2.gz

date Fri Aug 25 00:23:01 UTC 2023

size 34KB

}

entries {

name partition-agent

date Wed Aug 23 23:27:39 UTC 2023

size 11MB

}

entries {

name partition-software-manager

date Fri Aug 25 15:17:25 UTC 2023

size 1.6MB

}

entries {

name partition-software-manager.1

date Fri Aug 25 13:56:04 UTC 2023

size 101MB

}

entries {

name partition-software-manager.2.gz

date Tue Aug 22 05:38:03 UTC 2023

size 3.6MB

}

entries {

name partition-software-manager.3.gz

date Sat Aug 19 01:36:04 UTC 2023

size 3.6MB

}

entries {

name partition-software-manager.4.gz

date Tue Aug 15 21:39:03 UTC 2023

size 3.6MB

}

entries {

name partition-software-manager.5.gz

date Sat Aug 12 17:42:04 UTC 2023

size 3.6MB

}

entries {

name partition-update

date Fri Aug 25 15:13:08 UTC 2023

size 96MB

}

entries {

name partition-update.1

date Mon Mar 27 04:12:04 UTC 2023

size 101MB

}

entries {

name partition-update.2.gz

date Mon Nov 21 21:24:01 UTC 2022

size 678KB

}

entries {

name pel_log

date Thu Aug 24 23:50:27 UTC 2023

size 48MB

}

entries {

name reprogram_chassis_network

date Wed Aug 23 23:03:40 UTC 2023

size 111KB

}

entries {

name rsyslogd_init.log

date Thu Aug 24 00:59:03 UTC 2023

size 75MB

}

entries {

name run/

date Wed Aug 23 23:02:54 UTC 2023

size 4.0KB

}

entries {

name sshd.terminal-server

date Wed Aug 23 23:03:46 UTC 2023

size 3.0KB

}

entries {

name switchd

date Wed Aug 23 23:31:22 UTC 2023

size 4.7MB

}

entries {

name switchd-hal

date Wed Aug 23 23:02:55 UTC 2023

size 0B

}

entries {

name switchd.1

date Wed Feb 1 23:25:01 UTC 2023

size 705MB

}

entries {

name switchd.2.gz

date Tue Jan 31 09:26:46 UTC 2023

size 79MB

}

entries {

name switchd.3.gz

date Mon Jan 30 22:08:45 UTC 2023

size 79MB

}

entries {

name switchd.4.gz

date Mon Jan 30 10:37:45 UTC 2023

size 80MB

}

entries {

name switchd.5.gz

date Sun Jan 29 23:21:46 UTC 2023

size 80MB

}

entries {

name system-update

date Wed Aug 23 22:53:48 UTC 2023

size 49KB

}

entries {

name terminal-server.default

date Wed Aug 23 23:03:00 UTC 2023

size 109KB

}

entries {

name tftp.log

date Wed Aug 23 23:13:05 UTC 2023

size 1.7KB

}

entries {

name vcc-confd-go-standby-hal.3451

date Mon Jan 30 15:57:56 UTC 2023

size 0B

}

entries {

name vcc-confd-go-standby-hal.579

date Tue Sep 13 17:19:41 UTC 2022

size 0B

}

entries {

name velos.log

date Fri Aug 25 15:14:07 UTC 2023

size 157MB

}

entries {

name velos.log.1

date Fri Apr 28 09:46:11 UTC 2023

size 513MB

}

entries {

name velos.log.2.gz

date Sat Mar 11 00:35:13 UTC 2023

size 23MB

}

entries {

name velos.log.3.gz

date Sun Sep 25 04:16:08 UTC 2022

size 19MB

}

entries {

name velos.log.4.gz

date Fri Jul 15 05:53:11 UTC 2022

size 16MB

}

entries {

name velos.log.5.gz

date Thu Jul 14 23:12:01 UTC 2022

size 16MB

}

syscon-2-active#
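A listing this long is easier to work with once it is parsed, for example to find which log files take the most space. The sketch below parses the `entries { ... }` blocks from captured CLI output. It assumes the size units are 1024-based, which the listing itself does not state, and the function names are hypothetical.

```python
import re

# One "entries { name ... date ... size ... }" block from the file list output.
ENTRY_RE = re.compile(
    r"entries\s*\{\s*name\s+(?P<name>\S+)\s+date\s+(?P<date>.+?)\s+size\s+(?P<size>\S+)\s*\}",
    re.DOTALL,
)
# Assumed binary multipliers for the unit suffixes seen in the listing.
UNITS = {"B": 1, "KB": 1024, "MB": 1024**2, "GB": 1024**3}


def size_to_bytes(text):
    """Convert a size such as '6.9MB' or '0B' to an approximate byte count."""
    match = re.fullmatch(r"([\d.]+)(B|KB|MB|GB)", text)
    if match is None:
        raise ValueError(f"unrecognized size: {text!r}")
    return int(float(match.group(1)) * UNITS[match.group(2)])


def parse_file_list(output):
    """Parse CLI `file list` output into a list of dictionaries."""
    return [
        {"name": m["name"], "date": m["date"], "bytes": size_to_bytes(m["size"])}
        for m in ENTRY_RE.finditer(output)
    ]


def largest(entries, count=3):
    """Return the `count` largest entries, biggest first."""
    return sorted(entries, key=lambda e: e["bytes"], reverse=True)[:count]


# Three entries copied from the listing above.
sample = """
entries {
name audit.log
date Fri Aug 25 15:17:24 UTC 2023
size 6.9MB
}
entries {
name velos.log.1
date Fri Apr 28 09:46:11 UTC 2023
size 513MB
}
entries {
name confd
date Thu Jul 14 17:50:32 UTC 2022
size 0B
}
"""

for entry in largest(parse_file_list(sample), count=2):
    print(entry["name"], entry["bytes"])
```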

To view the contents of the velos.log file, use the command file show log/controller/velos.log:

syscon-1-active# file show log/controller/velos.log

2021-02-08T11:52:27-08:00 localhost.localdomain notice boot_marker: ---===[ BOOT-MARKER ]===---

2021-02-08T19:58:50.837735+00:00 controller-1 vcc-lacpd[0]: priority="Err" version=1.0 msgid=0x401000000000005 msg="Invalid Argument" function="fzmq_set_msg_queue_size" argument="handle NULL".

2021-02-08T19:58:50.837748+00:00 controller-1 user-manager[14]: priority="Notice" version=1.0 msgid=0x6801000000000001 msg="User Manager Starting".

2021-02-08T19:58:50.838837+00:00 controller-1 alert-service[7]: priority="Notice" version=1.0 msgid=0x2201000000000001 msg="Alert Service Starting..." version="3.4.7" date="Sun Oct 11 01:21:02 2020".

2021-02-08T19:58:50.838867+00:00 controller-1 alert-service[7]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-08T19:58:50.838881+00:00 controller-1 /usr/bin/authd[7]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-08T19:58:50.838934+00:00 controller-1 alert-service[7]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".
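Most lines in the sample output follow a regular shape: a timestamp, a host, a component with a process ID, and a series of key=value fields ending in a period. The sketch below parses that shape, which is inferred only from the sample lines in this section and is not an official format specification. Lines that do not fit, such as the boot marker, return None. If a key repeats on a line, the later value wins.

```python
import re

LINE_RE = re.compile(
    r"^(?P<timestamp>\S+)\s+"
    r"(?:(?P<source_ip>\d{1,3}(?:\.\d{1,3}){3})\s+)?"  # seen on some partition log lines
    r"(?P<host>\S+)\s+"
    r"(?P<component>[^\s\[]+)\[(?P<pid>\d+)\]:\s+"
    r"(?P<fields>.*)$"
)
# key="quoted value" or key=bare_value
FIELD_RE = re.compile(r'(\w+)=("[^"]*"|\S+)')


def parse_log_line(line):
    """Parse one log line into a dictionary, or return None if it does not fit."""
    match = LINE_RE.match(line.strip())
    if match is None:
        return None
    record = match.groupdict()
    raw_fields = record.pop("fields")
    if raw_fields.endswith("."):
        raw_fields = raw_fields[:-1]  # drop the period that terminates each line
    record["fields"] = {
        key: value[1:-1] if value.startswith('"') else value
        for key, value in FIELD_RE.findall(raw_fields)
    }
    return record


# Sample line copied from the output above.
line = (
    '2021-02-08T19:58:50.837735+00:00 controller-1 vcc-lacpd[0]: priority="Err" '
    'version=1.0 msgid=0x401000000000005 msg="Invalid Argument" '
    'function="fzmq_set_msg_queue_size" argument="handle NULL".'
)
record = parse_log_line(line)
print(record["component"], record["fields"]["priority"], record["fields"]["msg"])
```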

You can filter or redirect the output of the file show command. Add | ? to the command to see the available options.

syscon-1-active# file show /log/controller/velos.log | ?

Possible completions:

append Append output text to a file

begin Begin with the line that matches

count Count the number of lines in the output

exclude Exclude lines that match

include Include lines that match

linnum Enumerate lines in the output

more Paginate output

nomore Suppress pagination

save Save output text to a file

until End with the line that matches

You can also tail the log file: use file tail -f to follow the file live, or file tail -n <number of lines> to display the last lines of the file.

syscon-1-active# file tail -f log/controller/velos.log

2021-02-23T16:42:41.251528+00:00 controller-1 rsyslog-configd[7]: priority="Info" version=1.0 msgid=0x1301000000000005 msg="Setting component log severity" name="partition-software-manager" severity=6.

2021-02-23T16:42:41.284819+00:00 controller-1 rsyslog-configd[7]: priority="Info" version=1.0 msgid=0x1301000000000005 msg="Setting component log severity" name="vcc-chassis-manager" severity=6.

2021-02-23T16:42:41.290347+00:00 controller-1 rsyslog-configd[7]: priority="Info" version=1.0 msgid=0x1301000000000005 msg="Setting component log severity" name="vcc-confd" severity=6.

2021-02-23T16:42:41.295275+00:00 controller-1 rsyslog-configd[7]: priority="Info" version=1.0 msgid=0x1301000000000005 msg="Setting component log severity" name="vcc-ha" severity=6.

2021-02-23T16:42:41.305051+00:00 controller-1 rsyslog-configd[7]: priority="Info" version=1.0 msgid=0x1301000000000005 msg="Setting component log severity" name="vcc-lacpd" severity=6.

2021-02-23T16:42:41.305662+00:00 controller-1 rsyslog-configd[7]: priority="Info" version=1.0 msgid=0x1301000000000005 msg="Setting component log severity" name="vcc-partition-agent" severity=6.

2021-02-23T16:42:46.960349+00:00 controller-1 partition-software-manager[9]: priority="Info" version=1.0 msgid=0x1101000000000034 msg="configuration updated; num_part:" num_partition=4.

2021-02-23T16:42:46.960395+00:00 controller-1 partition-software-manager[9]: priority="Info" version=1.0 msgid=0x1101000000000036 msg="configuration updated; num_image:" num_partition_iso_image=4.

2021-02-23T16:57:51.752978+00:00 controller-1 partition-software-manager[9]: priority="Err" version=1.0 msgid=0x1101000000000052 msg="unknown class_tag:" field_tag=1537040122.

2021-02-23T16:57:56+00:00 controller-2 partition-software-manager[8]: priority="Err" version=1.0 msgid=0x1101000000000052 msg="unknown class_tag:" field_tag=1537040122.

The output below shows an example of tailing the last 20 lines of the velos.log file.

syscon-1-active# file tail -n 20 log/controller/velos.log

2021-02-23T16:42:41.077215+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1614098561077203609 id="1614045762610008304:2" seq=207646 mtype="SEND_TYPE" src="lacpd CC2 sender" dest="addr:tcp://10.1.5.62:1053".

2021-02-23T16:42:41.077239+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x3301000000000052 msg="PDU:" direction="Transmitted" interface="1/1.3" length=124.

2021-02-23T16:42:41.077257+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1614098561077247405 id="1614045762609932334:1" seq=207648 mtype="SEND_TYPE" src="lacpd CC1 sender" dest="addr:tcp://10.1.5.61:1053".

2021-02-23T16:42:41.077280+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x3301000000000052 msg="PDU:" direction="Transmitted" interface="2/1.3" length=124.

2021-02-23T16:42:41.077301+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1614098561077291045 id="1614045762610008304:2" seq=207647 mtype="SEND_TYPE" src="lacpd CC2 sender" dest="addr:tcp://10.1.5.62:1053".

2021-02-23T16:42:41.077391+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x3301000000000052 msg="PDU:" direction="Transmitted" interface="1/mgmt0" length=124.

2021-02-23T16:42:41.077411+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1614098561077399963 id="1614045762609932334:1" seq=207649 mtype="SEND_TYPE" src="lacpd CC1 sender" dest="addr:tcp://10.1.5.61:1053".

2021-02-23T16:42:41.077437+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x3301000000000052 msg="PDU:" direction="Transmitted" interface="2/mgmt0" length=124.

2021-02-23T16:42:41.077477+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1614098561077445005 id="1614045762610008304:2" seq=207648 mtype="SEND_TYPE" src="lacpd CC2 sender" dest="addr:tcp://10.1.5.62:1053".

2021-02-23T16:42:41.077637+00:00 controller-1 vcc-lacpd[7]: priority="Debug" version=1.0 msgid=0x3301000000000050 msg="" debug_str="zmqMsgHandler.receivePdu called".

Within a chassis partition, the log path is different: the main log file is log/velos.log rather than log/controller/velos.log. You can use the same CLI commands in the chassis partition that are used on the system controllers by substituting this path.

Production-1# file show log/velos.log

2021-02-22T23:46:23+00:00 10.1.18.51 controller-1(p2) partition-ha[1]: priority="Info" version=1.0 msgid=0x4602000000000004 msg="Active going Standby".

2021-02-22T23:46:23.381784+00:00 controller-2(p2) user-manager[223]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-22T23:46:23+00:00 10.1.18.2 blade-2(p2) platform-mgr[12]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-22T23:46:23+00:00 10.1.18.2 blade-2(p2) fpgamgr[12]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-22T23:46:23+00:00 10.1.18.2 blade-2(p2) /usr/bin/authd[7]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-22T23:46:23+00:00 10.1.18.2 blade-2(p2) l2-agent[12]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-22T23:46:23+00:00 10.1.18.2 blade-2(p2) partition-ha[1]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-22T23:46:23+00:00 10.1.18.2 blade-2(p2) /usr/sbin/fips-service[13]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

2021-02-22T23:46:23+00:00 10.1.18.1 blade-1(p2) platform-mgr[11]: priority="Info" version=1.0 msgid=0x6602000000000005 msg="DB is not ready".

The following command will tail the velos.log file on the chassis partition.

Production-1# file tail -f log/velos.log

2021-02-23T17:38:10+00:00 10.1.18.2 blade-2(p2) lacpd[1]: priority="Debug" version=1.0 msgid=0x3401000000000048 msg="" debug_str="velocityDatapathHandler.pollPdu() called".

2021-02-23T17:38:11+00:00 10.1.18.2 blade-2(p2) lacpd[1]: priority="Debug" version=1.0 msgid=0x3401000000000048 msg="" debug_str="velocityDatapathHandler.pollPdu() called".

2021-02-23T17:38:11+00:00 10.1.18.2 blade-2(p2) lacpd[1]: priority="Debug" version=1.0 msgid=0x3401000000000045 msg="PDU:" direction="Transmitted" interface="1/2.0" length=124.

2021-02-23T17:38:11+00:00 10.1.18.2 blade-2(p2) lacpd[1]: priority="Debug" version=1.0 msgid=0x3401000000000045 msg="PDU:" direction="Transmitted" interface="2/1.0" length=124.

2021-02-23T17:38:11+00:00 10.1.18.2 blade-2(p2) lacpd[1]: priority="Debug" version=1.0 msgid=0x3401000000000045 msg="PDU:" direction="Transmitted" interface="1/1.0" length=124.
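When you are sifting through a downloaded copy of velos.log, it can help to break each entry into its parts. The following is a minimal Python sketch, assuming entries follow the layout seen in the samples above (timestamp, optional source address and host, process[pid], then key=value fields ending in a period). That layout is inferred from the sample output rather than taken from a documented format, and the name parse_velos_line is hypothetical.

```python
import re

# Header: timestamp, origin (optional IP plus host name), process[pid], then the body.
LINE_RE = re.compile(
    r'^(?P<timestamp>\S+)\s+(?P<origin>.+?)\s+'
    r'(?P<process>\S+)\[(?P<pid>\d+)\]:\s+(?P<body>.*)$'
)
# Body fields look like key="quoted value" or key=bare_value.
FIELD_RE = re.compile(r'(\w+)=("(?:[^"\\]|\\.)*"|\S+)')

# One entry copied from the sample output above.
SAMPLE = (
    '2021-02-23T17:38:11+00:00 10.1.18.2 blade-2(p2) lacpd[1]: '
    'priority="Debug" version=1.0 msgid=0x3401000000000045 msg="PDU:" '
    'direction="Transmitted" interface="1/2.0" length=124.'
)


def parse_velos_line(line):
    """Return a dict describing one log entry, or None if it does not match."""
    match = LINE_RE.match(line.strip())
    if match is None:
        return None
    body = match.group("body")
    # Each entry ends with a period that is not part of the last value.
    if body.endswith("."):
        body = body[:-1]
    fields = {}
    for key, value in FIELD_RE.findall(body):
        # Drop the surrounding double quotes from quoted values.
        if len(value) >= 2 and value[0] == '"' and value[-1] == '"':
            value = value[1:-1]
        fields[key] = value
    return {
        "timestamp": match.group("timestamp"),
        "origin": match.group("origin"),
        "process": match.group("process"),
        "pid": int(match.group("pid")),
        "fields": fields,
    }
```

Calling parse_velos_line(SAMPLE) returns a dictionary whose "fields" entry holds the priority, msgid, interface, and other key/value pairs, which makes it straightforward to filter entries by process or priority.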

Viewing Logs from the webUI¶

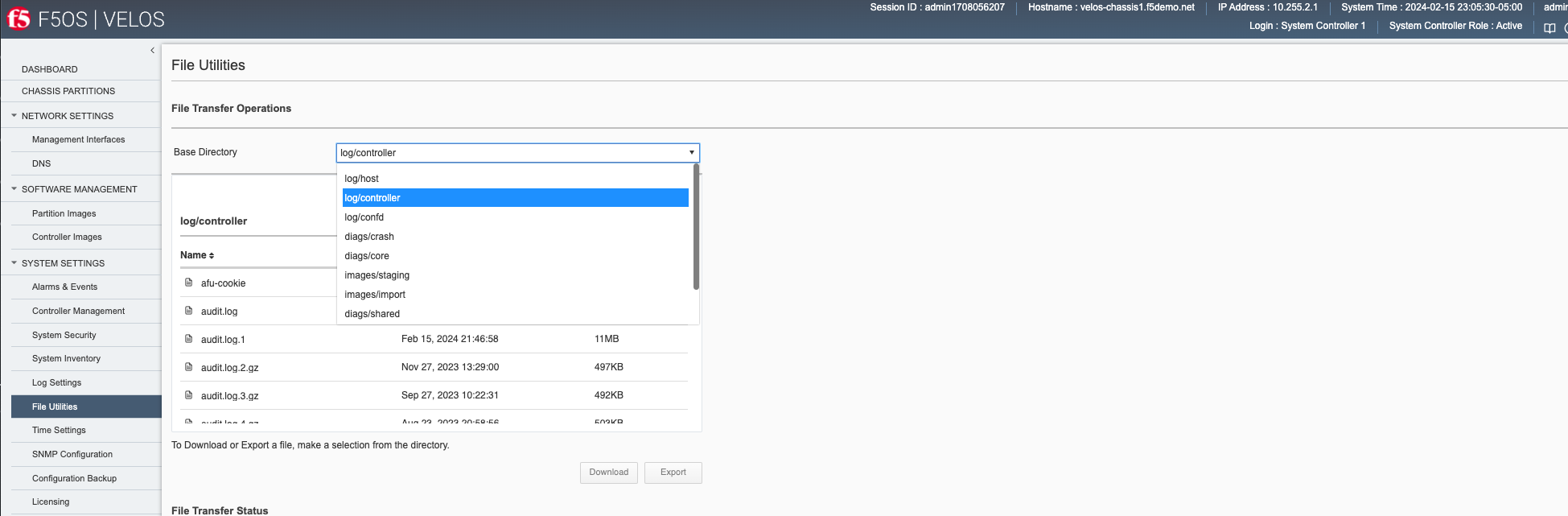

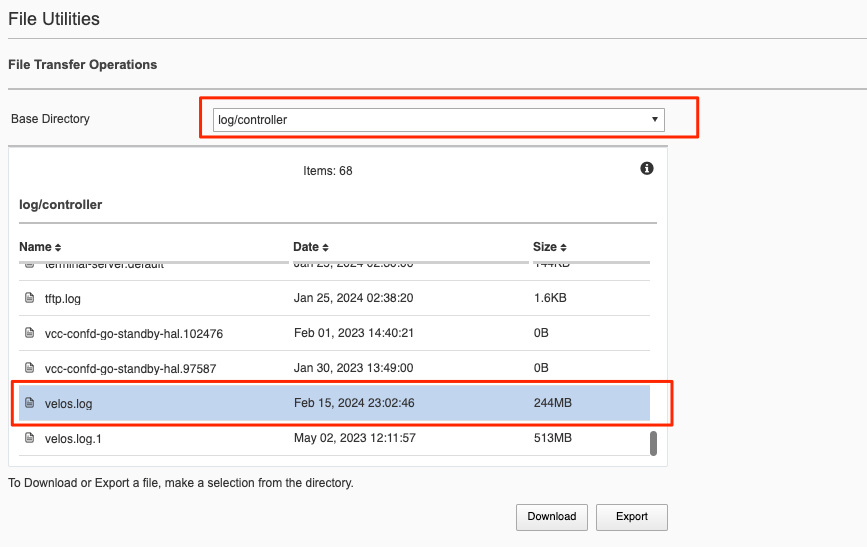

In the current release, you cannot view the F5OS logs directly in the webUI, but you can download them from it. To view the logs, use the CLI or API, download the files and view them locally, or send them to a remote syslog server. To download log files from the webUI, go to the System Settings -> File Utilities page, where you can select from several log directories. You can either Export files to a remote HTTPS server or Download them directly to your client machine through the browser.

If you want to download the main velos.log, select the directory /log/controller.

Downloading Logs from the API¶

You can download various logs from the F5OS layer using the F5OS API. To list the current log files in the log/controller/ directory, use the following API call.

POST https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/f5-utils-file-transfer:file/list

In the body of the API call, add the virtual path you want to list.

{

"f5-utils-file-transfer:path": "log/controller/"

}

You should see output similar to the following.

{

"f5-utils-file-transfer:output": {

"entries": [

{

"name": "afu-cookie",

"date": "Thu Jan 25 07:30:19 UTC 2024",

"size": "33B"

},

{

"name": "audit.log",

"date": "Fri Feb 16 04:13:06 UTC 2024",

"size": "2.4MB"

},

{

"name": "audit.log.1",

"date": "Fri Feb 16 02:46:58 UTC 2024",

"size": "11MB"

},

{

"name": "audit.log.2.gz",

"date": "Mon Nov 27 18:29:00 UTC 2023",

"size": "497KB"

},

{

"name": "audit.log.3.gz",

"date": "Wed Sep 27 14:22:31 UTC 2023",

"size": "492KB"

},

{

"name": "audit.log.4.gz",

"date": "Thu Aug 24 00:58:56 UTC 2023",

"size": "503KB"

},

{

"name": "audit.log.5.gz",

"date": "Wed Jul 12 19:39:02 UTC 2023",

"size": "501KB"

},

{

"name": "cc-confd",

"date": "Thu Jan 25 07:31:14 UTC 2024",

"size": "1.5MB"

},

{

"name": "cc-confd-hal",

"date": "Thu Jan 25 07:29:49 UTC 2024",

"size": "0B"

},

{

"name": "cc-confd-health",

"date": "Fri Feb 16 04:13:05 UTC 2024",

"size": "36MB"

},

{

"name": "cc-confd-health-diag-agent",

"date": "Thu Jan 25 07:29:53 UTC 2024",

"size": "0B"

},

{

"name": "cc-confd-health.1",

"date": "Tue Nov 7 08:12:01 UTC 2023",

"size": "101MB"

},

{

"name": "cc-confd-init",

"date": "Thu Jan 25 07:29:49 UTC 2024",

"size": "363KB"

},

{

"name": "cc-upgrade.dbg",

"date": "Thu Jan 25 07:30:42 UTC 2024",

"size": "361KB"

},

{

"name": "chassis-manager",

"date": "Thu Jan 25 07:31:07 UTC 2024",

"size": "77MB"

},

{

"name": "chassis-manager.1",

"date": "Mon Feb 27 01:46:02 UTC 2023",

"size": "101MB"

},

{

"name": "confd",

"date": "Thu Jan 26 22:00:08 UTC 2023",

"size": "0B"

},

{

"name": "confd_go_standby",

"date": "Wed Feb 1 19:40:21 UTC 2023",

"size": "128B"

},

{

"name": "confd_image_remove",

"date": "Thu Feb 15 21:41:00 UTC 2024",

"size": "142KB"

},

{

"name": "config-object-manager",

"date": "Thu Feb 15 21:56:47 UTC 2024",

"size": "55MB"

},

{

"name": "config-object-manager-hal",

"date": "Thu Jan 25 07:29:49 UTC 2024",

"size": "0B"

},

{

"name": "events/",

"date": "Thu Jan 25 05:01:22 UTC 2024",

"size": "4.0KB"

},

{

"name": "ha",

"date": "Fri Feb 16 04:03:29 UTC 2024",

"size": "30MB"

},

{

"name": "ha-hal",

"date": "Thu Jan 25 07:29:50 UTC 2024",

"size": "0B"

},

{

"name": "ha.1",

"date": "Tue Mar 14 13:02:02 UTC 2023",

"size": "101MB"

},

{

"name": "host-config",

"date": "Fri Feb 16 04:13:07 UTC 2024",

"size": "17MB"

},

{

"name": "host-config-hal",

"date": "Thu Jan 25 07:29:52 UTC 2024",

"size": "0B"

},

{

"name": "host-config.1",

"date": "Sun Feb 11 21:13:04 UTC 2024",

"size": "101MB"

},

{

"name": "host-config.2.gz",

"date": "Wed Jan 17 08:22:04 UTC 2024",

"size": "2.7MB"

},

{

"name": "host-config.3.gz",

"date": "Tue Dec 19 23:58:04 UTC 2023",

"size": "2.7MB"

},

{

"name": "host-config.4.gz",

"date": "Fri Nov 24 12:24:04 UTC 2023",

"size": "2.7MB"

},

{

"name": "host-config.5.gz",

"date": "Mon Oct 30 02:38:03 UTC 2023",

"size": "2.7MB"

},

{

"name": "http_error_log",

"date": "Thu Jan 25 07:28:10 UTC 2024",

"size": "15KB"

},

{

"name": "httpd/",

"date": "Sun May 7 18:26:04 UTC 2023",

"size": "4.0KB"

},

{

"name": "image-server",

"date": "Fri Feb 16 04:04:16 UTC 2024",

"size": "2.6MB"

},

{

"name": "image-server-dhcp",

"date": "Fri Feb 16 04:04:16 UTC 2024",

"size": "15MB"

},

{

"name": "image-server-hal",

"date": "Thu Jan 25 07:28:10 UTC 2024",

"size": "0B"

},

{

"name": "image-server-httpd",

"date": "Thu Jan 26 22:00:12 UTC 2023",

"size": "0B"

},

{

"name": "image-server-monitor",

"date": "Fri Feb 16 04:04:16 UTC 2024",

"size": "181KB"

},

{

"name": "lcd.log",

"date": "Wed Jan 3 18:47:35 UTC 2024",

"size": "418KB"

},

{

"name": "logrotate.log",

"date": "Fri Feb 16 04:13:01 UTC 2024",

"size": "2.4MB"

},

{

"name": "logrotate.log.1",

"date": "Thu Feb 15 21:14:01 UTC 2024",

"size": "5.1MB"

},

{

"name": "logrotate.log.2.gz",

"date": "Thu Feb 15 06:26:01 UTC 2024",

"size": "34KB"

},

{

"name": "partition-agent",

"date": "Thu Feb 15 21:56:53 UTC 2024",

"size": "2.5MB"

},

{

"name": "partition-software-manager",

"date": "Fri Feb 16 04:13:08 UTC 2024",

"size": "12MB"

},

{

"name": "partition-software-manager.1",

"date": "Thu Feb 15 19:25:04 UTC 2024",

"size": "101MB"

},

{

"name": "partition-software-manager.2.gz",

"date": "Mon Feb 12 17:52:04 UTC 2024",

"size": "3.7MB"

},

{

"name": "partition-software-manager.3.gz",

"date": "Fri Feb 9 16:20:04 UTC 2024",

"size": "3.7MB"

},

{

"name": "partition-software-manager.4.gz",

"date": "Tue Feb 6 14:46:04 UTC 2024",

"size": "3.7MB"

},

{

"name": "partition-software-manager.5.gz",

"date": "Sat Feb 3 13:12:03 UTC 2024",

"size": "3.7MB"

},

{

"name": "partition-update",

"date": "Fri Feb 16 04:10:14 UTC 2024",

"size": "47MB"

},

{

"name": "partition-update.1",

"date": "Sat Dec 2 15:33:03 UTC 2023",

"size": "101MB"

},

{

"name": "partition-update.2.gz",

"date": "Sat Jul 1 17:53:02 UTC 2023",

"size": "673KB"

},

{

"name": "pel_log",

"date": "Thu Feb 15 22:06:11 UTC 2024",

"size": "5.3MB"

},

{

"name": "pel_log.1",

"date": "Sat Dec 16 03:15:02 UTC 2023",

"size": "101MB"

},

{

"name": "reprogram_chassis_network",

"date": "Thu Jan 25 07:30:47 UTC 2024",

"size": "59KB"

},

{

"name": "rsyslogd_init.log",

"date": "Thu Jan 25 07:30:55 UTC 2024",

"size": "114KB"

},

{

"name": "run/",

"date": "Thu Jan 25 07:29:49 UTC 2024",

"size": "4.0KB"

},

{

"name": "sshd.terminal-server",

"date": "Thu Jan 25 07:30:51 UTC 2024",

"size": "8.1KB"

},

{

"name": "switchd",

"date": "Tue Feb 13 20:10:53 UTC 2024",

"size": "11MB"

},

{

"name": "switchd-hal",

"date": "Thu Jan 25 07:29:52 UTC 2024",

"size": "0B"

},

{

"name": "system-update",

"date": "Wed Jan 3 18:55:21 UTC 2024",

"size": "35KB"

},

{

"name": "terminal-server.default",

"date": "Thu Jan 25 07:30:06 UTC 2024",

"size": "144KB"

},

{

"name": "tftp.log",

"date": "Thu Jan 25 07:38:20 UTC 2024",

"size": "1.6KB"

},

{

"name": "vcc-confd-go-standby-hal.102476",

"date": "Wed Feb 1 19:40:21 UTC 2023",

"size": "0B"

},

{

"name": "vcc-confd-go-standby-hal.97587",

"date": "Mon Jan 30 18:49:00 UTC 2023",

"size": "0B"

},

{

"name": "velos.log",

"date": "Fri Feb 16 04:13:02 UTC 2024",

"size": "244MB"

},

{

"name": "velos.log.1",

"date": "Tue May 2 16:11:57 UTC 2023",

"size": "513MB"

}

]

}

}
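The file listing call can also be scripted. The following is a minimal Python sketch that builds the POST request shown above and picks the largest files out of a response shaped like the sample output. The function names are hypothetical, the Content-Type header is an assumption based on standard RESTCONF usage, authentication is deliberately left out, and the size suffixes are assumed to be multiples of 1024.

```python
import json
import urllib.request

# Assumption: size suffixes are binary multiples (1KB = 1024 bytes).
UNITS = {"B": 1, "KB": 1024, "MB": 1024 ** 2, "GB": 1024 ** 3}


def build_list_request(host, path, port=8888):
    """Build (but do not send) the file list request for a virtual path."""
    url = f"https://{host}:{port}/restconf/data/f5-utils-file-transfer:file/list"
    body = json.dumps({"f5-utils-file-transfer:path": path}).encode("utf-8")
    # Assumption: the standard RESTCONF JSON media type is accepted.
    headers = {"Content-Type": "application/yang-data+json"}
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def size_to_bytes(size):
    """Convert a size string such as '2.4MB' or '33B' to an integer byte count."""
    # Check the longer suffixes first so 'KB' is not mistaken for 'B'.
    for unit in ("GB", "MB", "KB", "B"):
        if size.endswith(unit):
            return int(float(size[: -len(unit)]) * UNITS[unit])
    raise ValueError(f"unrecognized size: {size!r}")


def largest_files(response, count=3):
    """Return the largest regular files from a parsed listing response."""
    entries = response["f5-utils-file-transfer:output"]["entries"]
    # Names ending in '/' are directories in the sample output; skip them.
    files = [entry for entry in entries if not entry["name"].endswith("/")]
    files.sort(key=lambda entry: size_to_bytes(entry["size"]), reverse=True)
    return files[:count]
```

To send the request, add whatever credentials your system requires and pass the result to urllib.request.urlopen; the parsed JSON response can then be handed to largest_files to see which logs consume the most space.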

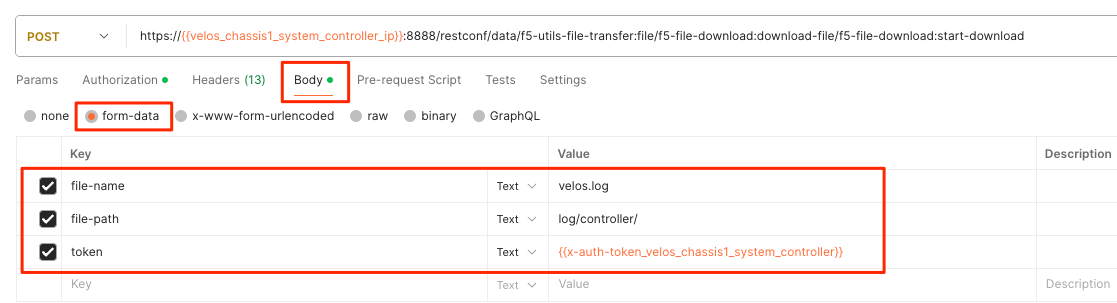

To download a specific log file, use the following API call.

POST https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/f5-utils-file-transfer:file/f5-file-download:download-file/f5-file-download:start-download

In the body of the API call, select form-data, and then enter the key/value pairs as seen below. The example provided will download the velos.log file that resides in the log/controller directory.

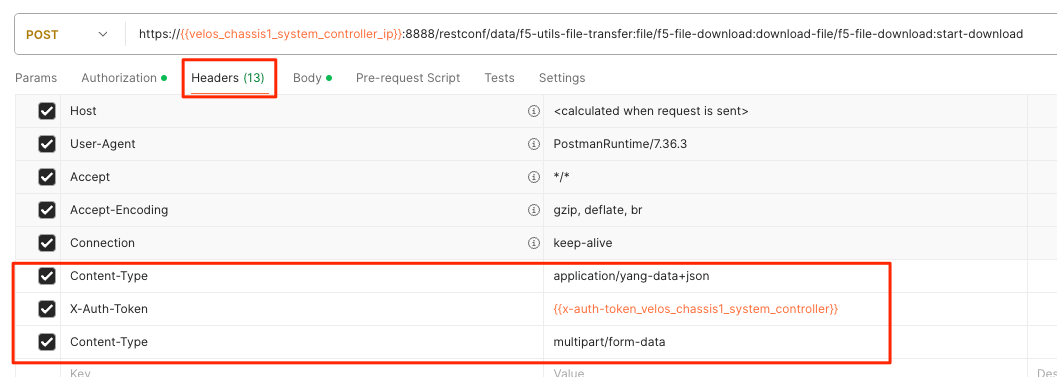

For the Headers section of the Postman request, be sure to add the following headers:

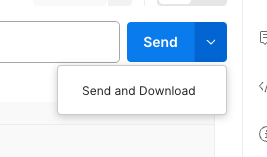

If you are using Postman, instead of clicking Send, click on the arrow next to Send, and then select Send and Download. You will then be prompted to save the file to your local file system.

To download another log file in the same directory, such as the audit.log file, simply change the file name in the form-data section.

Viewing Event Logs from the API¶

If the system currently has any active alarms, you can view them via the following API call:

GET https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/alarms

If there are no active alarms, then no output will be displayed.

You can display the F5OS Event Log via the following API call:

GET https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/f5-event-log:events

This displays every event in the F5OS Event Log from the beginning, not just the active ones:

{

"f5-event-log:events": {

"event": [

{

"log": "65793 psu-3 psu-fault EVENT NA \"Deasserted: PSU 3 present\" \"2023-10-09 15:17:25.590783542 UTC\""

},

{

"log": "65793 psu-3 psu-fault EVENT NA \"Deasserted: PSU 3 input OK\" \"2023-10-09 15:17:25.697411943 UTC\""

},

{

"log": "65793 psu-4 psu-fault EVENT NA \"Deasserted: PSU 4 present\" \"2023-10-09 15:17:25.865224822 UTC\""

},

{

"log": "65793 psu-4 psu-fault EVENT NA \"Deasserted: PSU 4 input OK\" \"2023-10-09 15:17:25.868507303 UTC\""

},

{

"log": "65793 psu-4 psu-fault EVENT NA \"Deasserted: PSU 4 output OK\" \"2023-10-09 15:17:25.871668123 UTC\""

},

{

"log": "66048 controller-1 arbitration-state EVENT NA \"Deasserted: peer arbitration health state\" \"2023-10-09 15:17:25.875765168 UTC\""

},

{

"log": "66048 controller-1 arbitration-state EVENT NA \"Deasserted: peer arbitration health state\" \"2023-10-09 15:17:25.879797733 UTC\""

},

{

"log": "66048 controller-1 arbitration-state EVENT NA \"Asserted: peer arbitration health state\" \"2023-10-09 15:23:59.285049747 UTC\""

},

{

"log": "65546 controller-2 thermal-fault EVENT NA \"CPU TCTL-Delta at -45.0 degC\" \"2023-10-09 15:20:05.466308600 UTC\""

},

{

"log": "66048 controller-2 arbitration-state EVENT NA \"Asserted: local arbitration health state\" \"2023-10-09 15:20:07.464237779 UTC\""

},

{

"log": "326781 controller-2 reboot EVENT NA \"reboot - controller-2.chassis.local F5OS-C controller version 1.6.1-18991\" \"2023-10-09 15:20:20.656236104 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"Deasserted: VQF Thermtrip\" \"2023-10-09 15:59:11.287952620 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"Deasserted: ATSE Thermtrip\" \"2023-10-09 15:59:11.291913806 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"Deasserted: VQF hot\" \"2023-10-09 15:59:13.284937388 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"Deasserted: ATSE hot\" \"2023-10-09 15:59:13.290565535 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"Deasserted: ATSE Thermtrip\" \"2023-10-09 15:59:13.294731490 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"Deasserted: VQF hot\" \"2023-10-09 15:59:13.305101666 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"Deasserted: ATSE hot\" \"2023-10-09 15:59:13.309989489 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"Deasserted: VQF Thermtrip\" \"2023-10-09 15:59:13.314486891 UTC\""

},

{

"log": "65536 blade-1 hardware-device-fault EVENT NA \"Deasserted: CPU machine check error\" \"2023-10-09 15:59:13.318842371 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"BWE at +40.3 degC\" \"2023-10-09 15:59:13.332817116 UTC\""

},

{

"log": "65536 blade-2 hardware-device-fault EVENT NA \"Deasserted: CPU machine check error\" \"2023-10-09 15:59:15.288433804 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"BWE at +36.5 degC\" \"2023-10-09 15:59:15.300134311 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"VQF1 at +45.6 degC\" \"2023-10-09 15:59:15.307162918 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"ATSE5 at +47.1 degC\" \"2023-10-09 15:59:17.285244897 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"ATSE1 at +46.3 degC\" \"2023-10-09 15:59:17.290907800 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"ATSE2 at +46.8 degC\" \"2023-10-09 15:59:17.295233766 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"ATSE3 at +46.9 degC\" \"2023-10-09 15:59:17.299505749 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"ATSE4 at +46.9 degC\" \"2023-10-09 15:59:17.303687937 UTC\""

},

{

"log": "65546 blade-1 thermal-fault EVENT NA \"VQF2 at +46.2 degC\" \"2023-10-09 15:59:17.307745308 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"VQF1 at +44.0 degC\" \"2023-10-09 15:59:17.314931399 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"VQF2 at +45.0 degC\" \"2023-10-09 15:59:17.319608487 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"ATSE1 at +43.8 degC\" \"2023-10-09 15:59:17.326570152 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"ATSE2 at +44.8 degC\" \"2023-10-09 15:59:17.330799112 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"ATSE3 at +44.5 degC\" \"2023-10-09 15:59:17.335193886 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"ATSE4 at +43.7 degC\" \"2023-10-09 15:59:17.347510071 UTC\""

},

{

"log": "65546 blade-2 thermal-fault EVENT NA \"ATSE5 at +43.9 degC\" \"2023-10-09 15:59:17.352626694 UTC\""

},

{

"log": "65536 blade-2 hardware-device-fault EVENT NA \"Deasserted: CPU machine check error\" \"2023-10-09 15:59:21.369228251 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"Deasserted: VQF hot\" \"2023-10-09 15:59:33.300107701 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"Deasserted: ATSE hot\" \"2023-10-09 15:59:33.306066434 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"Deasserted: VQF Thermtrip\" \"2023-10-09 15:59:33.365177931 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"Deasserted: ATSE Thermtrip\" \"2023-10-09 15:59:33.369903466 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"BWE at +35.7 degC\" \"2023-10-09 15:59:35.283246598 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"ATSE1 at +40.9 degC\" \"2023-10-09 15:59:39.288933173 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"ATSE2 at +42.2 degC\" \"2023-10-09 15:59:39.302401579 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"ATSE3 at +41.8 degC\" \"2023-10-09 15:59:39.343269968 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"VQF1 at +46.0 degC\" \"2023-10-09 15:59:39.347217437 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"VQF2 at +47.1 degC\" \"2023-10-09 15:59:39.351551030 UTC\""

},

{

"log": "65546 blade-3 thermal-fault EVENT NA \"ATSE4 at +41.4 degC\" \"2023-10-09 15:59:39.362056270 UTC\""

}

]

}

}
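Each "log" string in the response packs several values into one line, so a small parser can make the output easier to summarize. The following is a minimal Python sketch, assuming every entry has the seven space-separated parts seen in the sample above, with the last two enclosed in double quotes. The key names chosen here (code, source, type, kind, flag, description, timestamp) and the function names are hypothetical labels, not names defined by F5OS.

```python
import shlex
from collections import Counter


def parse_event(log):
    """Split one event log string into its seven parts."""
    # shlex honors the double quotes around the description and timestamp.
    parts = shlex.split(log)
    if len(parts) != 7:
        raise ValueError(f"unexpected event format: {log!r}")
    code, source, event_type, kind, flag, description, timestamp = parts
    return {
        "code": int(code),
        "source": source,
        "type": event_type,
        "kind": kind,
        "flag": flag,
        "description": description,
        "timestamp": timestamp,
    }


def count_by_source(response):
    """Count events per source component in a parsed API response."""
    events = response["f5-event-log:events"]["event"]
    return Counter(parse_event(item["log"])["source"] for item in events)
```

Passing the parsed JSON response to count_by_source gives a quick view of which controllers, blades, or power supplies generate the most events.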

Logging Subsystems / Software Component Levels¶

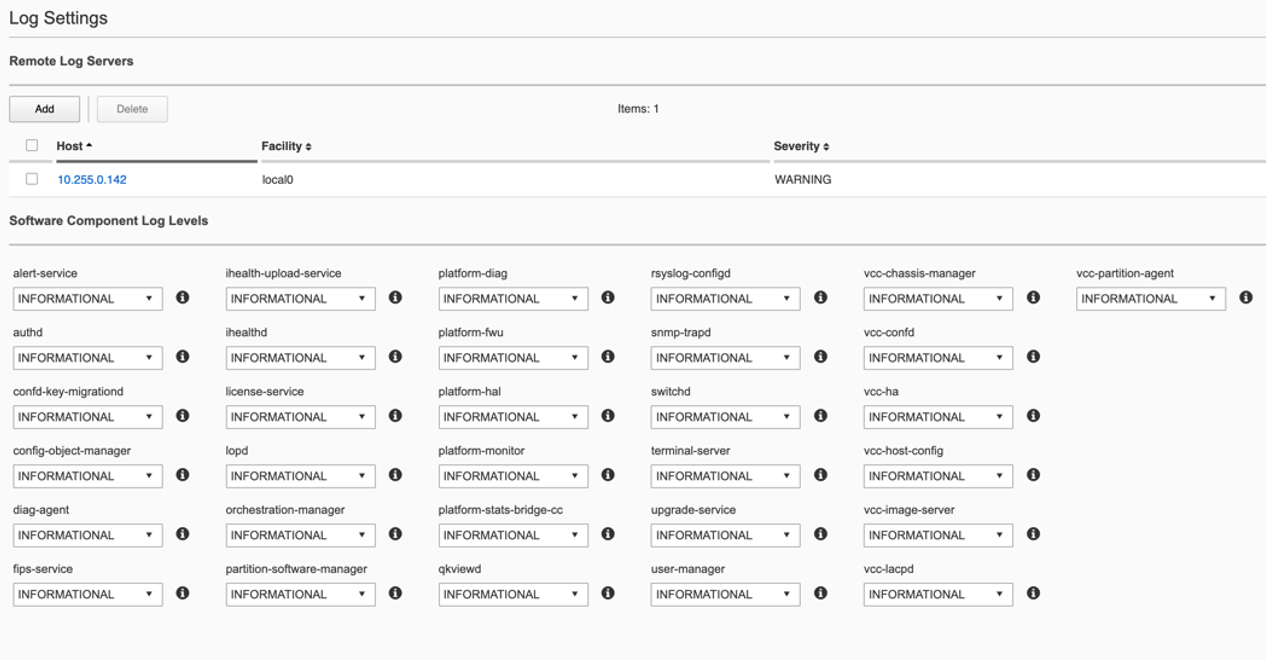

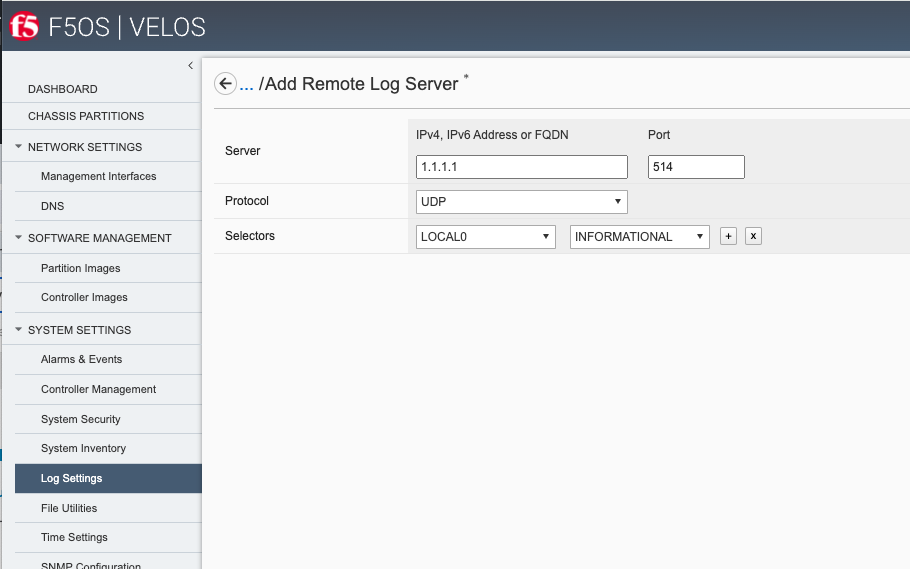

In both the system controller and chassis partition webUIs, you can configure logging levels for local logging and add remote logging servers. Raising a Software Component Log Level sends additional logging information to the local log. Remote logging has its own Severity level, which caps the level of all messages sent to a remote log server regardless of the individual Component Log Levels. This allows more information to be logged locally for debugging while keeping remote logging to a minimum. If you want more verbose output sent to the remote logging host, raise its severity to see additional messages.
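The interaction between the two levels can be illustrated with a small model. The following Python sketch is a simplification for illustration only, not a description of how F5OS implements filtering: it assumes the standard syslog ordering of the severity names, that a message is logged locally when it is at least as severe as its component's level, and that it is forwarded only if it also meets the remote severity. The function names are hypothetical.

```python
# Severity names ordered from most severe to least severe (syslog ordering).
SEVERITIES = [
    "EMERGENCY", "ALERT", "CRITICAL", "ERROR",
    "WARNING", "NOTICE", "INFORMATIONAL", "DEBUG",
]


def passes(message_severity, threshold):
    """True if the message is at least as severe as the threshold."""
    return SEVERITIES.index(message_severity) <= SEVERITIES.index(threshold)


def destinations(message_severity, component_level, remote_severity):
    """Model where a message ends up given the two configured levels."""
    local = passes(message_severity, component_level)
    # The remote severity acts as a second, independent cap.
    remote = local and passes(message_severity, remote_severity)
    return {"local": local, "remote": remote}
```

Under this model, a DEBUG message from a component set to DEBUG is written locally but is not forwarded while the remote severity remains INFORMATIONAL.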

Changing the Software Component Log Levels via CLI¶

To change any of the logging levels via the CLI, you must be in config mode. Use the system logging sw-components sw-component <component name> config severity <logging severity> command. You must commit for the change to take effect. Be sure to set logging levels back to normal after troubleshooting is complete.

syscon-1-active(config)# system logging sw-components sw-component ?

Possible completions:

alert-service audit-service authd confd-key-migrationd config-object-manager diag-agent fips-service

firewall-manager ihealth-upload-service ihealthd license-service lopd orchestration-manager partition-software-manager

platform-diag platform-fwu platform-hal platform-monitor platform-stats platform-stats-bridge-cc qkviewd

rsyslog-configd snmp-service snmp-trapd sshd-crypto switchd system-service terminal-server

upgrade-service user-manager vcc-chassis-manager vcc-confd vcc-ha vcc-host-config vcc-image-server

vcc-lacpd vcc-partition-agent

syscon-1-active(config)#

Below is an example of setting the sw-component authd to DEBUG, and then setting it back to INFORMATIONAL.

syscon-1-active(config)# system logging sw-components sw-component authd config severity ?

Description: sw-component logging severity level. Default is INFORMATIONAL.

Possible completions:

[INFORMATIONAL] ALERT CRITICAL DEBUG EMERGENCY ERROR INFORMATIONAL NOTICE WARNING

syscon-1-active(config)# system logging sw-components sw-component authd config severity DEBUG

syscon-1-active(config-sw-component-authd)# commit

Commit complete.

syscon-1-active(config-sw-component-authd)#

syscon-1-active(config-sw-component-authd)# exit

syscon-1-active(config)# system logging sw-components sw-component authd config severity INFORMATIONAL

syscon-1-active(config-sw-component-authd)# commit

Commit complete.

syscon-1-active(config-sw-component-authd)#

Adjusting Software Component Logging Levels via webUI¶

In the F5OS webUI, you can configure logging levels for local logging and add remote logging servers. Raising a Software Component Log Level sends additional logging information to the local log. Remote logging has its own Severity level, which caps the level of all messages sent to a remote log server regardless of the individual Component Log Levels. This allows more information to be logged locally for debugging while keeping remote logging to a minimum. If you want more verbose information sent to the remote logging host, raise its severity to see additional messages.

Adjusting Software Component Logging Levels via API¶

You can display the logging levels of all software components via the following API call:

GET https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/logging

Every subsystem will be displayed along with its current setting:

{

"openconfig-system:logging": {

"remote-servers": {

"remote-server": [

{

"host": "10.255.85.164",

"config": {

"host": "10.255.85.164",

"remote-port": 514,

"f5-openconfig-system-logging:proto": "udp"

},

"selectors": {

"selector": [

{

"facility": "f5-system-logging-types:LOCAL0",

"severity": "INFORMATIONAL",

"config": {

"facility": "f5-system-logging-types:LOCAL0",

"severity": "INFORMATIONAL"

}

},

{

"facility": "f5-system-logging-types:AUTHPRIV",

"severity": "INFORMATIONAL",

"config": {

"facility": "f5-system-logging-types:AUTHPRIV",

"severity": "INFORMATIONAL"

}

}

]

}

}

]

},

"f5-openconfig-system-logging:sw-components": {

"sw-component": [

{

"name": "alert-service",

"config": {

"name": "alert-service",

"description": "Alert service",

"severity": "INFORMATIONAL"

}

},

{

"name": "audit-service",

"config": {

"name": "audit-service",

"description": "Audit message handling service",

"severity": "INFORMATIONAL"

}

},

{

"name": "authd",

"config": {

"name": "authd",

"description": "Authentication configuration",

"severity": "INFORMATIONAL"

}

},

{

"name": "confd-key-migrationd",

"config": {

"name": "confd-key-migrationd",

"description": "Confd Primary Key Migration Service",

"severity": "INFORMATIONAL"

}

},

{

"name": "config-object-manager",

"config": {

"name": "config-object-manager",

"description": "Configuration object manager",

"severity": "INFORMATIONAL"

}

},

{

"name": "diag-agent",

"config": {

"name": "diag-agent",

"description": "Diag agent",

"severity": "INFORMATIONAL"

}

},

{

"name": "fips-service",

"config": {

"name": "fips-service",

"description": "FIPS service",

"severity": "INFORMATIONAL"

}

},

{

"name": "firewall-manager",

"config": {

"name": "firewall-manager",

"description": "Firewall Manager",

"severity": "INFORMATIONAL"

}

},

{

"name": "ihealth-upload-service",

"config": {

"name": "ihealth-upload-service",

"description": "Upload diagnostics data service",

"severity": "INFORMATIONAL"

}

},

{

"name": "ihealthd",

"config": {

"name": "ihealthd",

"description": "Communication proxy for ihealth-upload-service",

"severity": "INFORMATIONAL"

}

},

{

"name": "license-service",

"config": {

"name": "license-service",

"description": "License service",

"severity": "INFORMATIONAL"

}

},

{

"name": "lopd",

"config": {

"name": "lopd",

"description": "Communication proxy for the Lights Out Processor",

"severity": "INFORMATIONAL"

}

},

{

"name": "orchestration-manager",

"config": {

"name": "orchestration-manager",

"description": "Orchestration manager",

"severity": "INFORMATIONAL"

}

},

{

"name": "partition-software-manager",

"config": {

"name": "partition-software-manager",

"description": "Partition software manager",

"severity": "INFORMATIONAL"

}

},

{

"name": "platform-diag",

"config": {

"name": "platform-diag",

"description": "Platform diag service",

"severity": "INFORMATIONAL"

}

},

{

"name": "platform-fwu",

"config": {

"name": "platform-fwu",

"description": "Platform firmware upgrade",

"severity": "INFORMATIONAL"

}

},

{

"name": "platform-hal",

"config": {

"name": "platform-hal",

"description": "Platform hardware abstraction layer",

"severity": "INFORMATIONAL"

}

},

{

"name": "platform-monitor",

"config": {

"name": "platform-monitor",

"description": "Platform monitor",

"severity": "INFORMATIONAL"

}

},

{

"name": "platform-stats",

"config": {

"name": "platform-stats",

"description": "Collects platform statistics like cpu and memory utilization",

"severity": "INFORMATIONAL"

}

},

{

"name": "platform-stats-bridge-cc",

"config": {

"name": "platform-stats-bridge-cc",

"description": "Platform stats bridge",

"severity": "INFORMATIONAL"

}

},

{

"name": "qkviewd",

"config": {

"name": "qkviewd",

"description": "Diagnostic information",

"severity": "INFORMATIONAL"

}

},

{

"name": "rsyslog-configd",

"config": {

"name": "rsyslog-configd",

"description": "Logging configuration",

"severity": "INFORMATIONAL"

}

},

{

"name": "snmp-service",

"config": {

"name": "snmp-service",

"description": "SNMP service",

"severity": "INFORMATIONAL"

}

},

{

"name": "snmp-trapd",

"config": {

"name": "snmp-trapd",

"description": "SNMP trap",

"severity": "INFORMATIONAL"

}

},

{

"name": "sshd-crypto",

"config": {

"name": "sshd-crypto",

"description": "Enable Information logging for sshd-crypto",

"severity": "INFORMATIONAL"

}

},

{

"name": "switchd",

"config": {

"name": "switchd",

"description": "Switch daemon",

"severity": "INFORMATIONAL"

}

},

{

"name": "system-service",

"config": {

"name": "system-service",

"description": "System Configuration service",

"severity": "INFORMATIONAL"

}

},

{

"name": "terminal-server",

"config": {

"name": "terminal-server",

"description": "Terminal server",

"severity": "INFORMATIONAL"

}

},

{

"name": "upgrade-service",

"config": {

"name": "upgrade-service",

"description": "Software upgrade service",

"severity": "INFORMATIONAL"

}

},

{

"name": "user-manager",

"config": {

"name": "user-manager",

"description": "User manager",

"severity": "INFORMATIONAL"

}

},

{

"name": "vcc-chassis-manager",

"config": {

"name": "vcc-chassis-manager",

"description": "Chassis manager",

"severity": "INFORMATIONAL"

}

},

{

"name": "vcc-confd",

"config": {

"name": "vcc-confd",

"description": "System controller ConfD",

"severity": "INFORMATIONAL"

}

},

{

"name": "vcc-ha",

"config": {

"name": "vcc-ha",

"description": "High availability",

"severity": "INFORMATIONAL"

}

},

{

"name": "vcc-host-config",

"config": {

"name": "vcc-host-config",

"description": "Host configuration",

"severity": "INFORMATIONAL"

}

},

{

"name": "vcc-image-server",

"config": {

"name": "vcc-image-server",

"description": "Image server",

"severity": "INFORMATIONAL"

}

},

{

"name": "vcc-lacpd",

"config": {

"name": "vcc-lacpd",

"description": "Link aggregation control protocol",

"severity": "INFORMATIONAL"

}

},

{

"name": "vcc-partition-agent",

"config": {

"name": "vcc-partition-agent",

"description": "Partition agent",

"severity": "INFORMATIONAL"

}

}

]

},

"f5-openconfig-system-logging:host-logs": {

"config": {

"remote-forwarding": {

"enabled": true,

"f5-system-logging-controller:include-standby": [

null

]

},

"selectors": {

"selector": [

{

"facility": "openconfig-system-logging:AUTHPRIV",

"severity": "DEBUG"

}

]

},

"files": {

"file": [

{

"name": "audit/audit.log"

}

]

}

}

}

}

}
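With this many components, it is easy to forget one that was left at DEBUG after troubleshooting. The following is a minimal Python sketch that reads a response shaped like the output above and reports any component whose severity differs from the default. The function names are hypothetical; the JSON keys are the ones shown in the sample response.

```python
def component_levels(response):
    """Map each sw-component name to its configured severity."""
    logging_cfg = response["openconfig-system:logging"]
    components = logging_cfg["f5-openconfig-system-logging:sw-components"]
    return {
        item["name"]: item["config"]["severity"]
        for item in components["sw-component"]
    }


def non_default(levels, default="INFORMATIONAL"):
    """Return the sorted names of components not set to the default severity."""
    return sorted(name for name, severity in levels.items() if severity != default)
```

Running non_default(component_levels(response)) on the sample output above would return an empty list, since every component shown is set to INFORMATIONAL.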

If you need to change the logging level to troubleshoot an issue, you can do so via the API. Below is an example of changing the logging level for the audit-service sw-component to DEBUG.

PATCH https://{{velos_chassis1_system_controller_ip}}:8888/restconf/data/openconfig-system:system/logging

In the body of the API call, enter the sw-component you want to change, and the severity level you’d like to set.

{

"openconfig-system:logging": {

"f5-openconfig-system-logging:sw-components": {

"sw-component": {

"name": "audit-service",

"config": {

"name": "audit-service",

"description": "Audit message handling service",

"severity": "DEBUG"

}

}

}

}

}

When you are finished troubleshooting, set the logging level back to the default (INFORMATIONAL) by sending the same PATCH request with the following body.

{

"openconfig-system:logging": {

"f5-openconfig-system-logging:sw-components": {

"sw-component": {

"name": "audit-service",

"config": {

"name": "audit-service",

"description": "Audit message handling service",

"severity": "INFORMATIONAL"

}

}

}

}

}
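Because raising and restoring a level differ only in the severity value, the PATCH request is a natural candidate for a small helper. The following is a minimal Python sketch that builds the request bodies shown above. The function name is hypothetical, the Content-Type header is an assumption based on standard RESTCONF usage, authentication is omitted, and leaving out the description when none is supplied is an assumption rather than documented behavior.

```python
import json
import urllib.request

# Severity names taken from the CLI completions shown earlier in this section.
VALID_SEVERITIES = {
    "ALERT", "CRITICAL", "DEBUG", "EMERGENCY",
    "ERROR", "INFORMATIONAL", "NOTICE", "WARNING",
}


def build_severity_patch(host, component, severity, description=None, port=8888):
    """Build (but do not send) a PATCH request that sets one component's severity."""
    if severity not in VALID_SEVERITIES:
        raise ValueError(f"unknown severity: {severity!r}")
    config = {"name": component, "severity": severity}
    if description is not None:
        # Mirrors the sample body, which repeats the component description.
        config["description"] = description
    payload = {
        "openconfig-system:logging": {
            "f5-openconfig-system-logging:sw-components": {
                "sw-component": {"name": component, "config": config}
            }
        }
    }
    url = f"https://{host}:{port}/restconf/data/openconfig-system:system/logging"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        # Assumption: the standard RESTCONF JSON media type is accepted.
        headers={"Content-Type": "application/yang-data+json"},
        method="PATCH",
    )
```

Building one request with DEBUG and a second with INFORMATIONAL up front makes it less likely that a component is left at a verbose level once troubleshooting is finished.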

Logging Software Component Descriptions¶

Below is a brief description of what each sw-component is responsible for, along with some example logging messages when DEBUG is enabled. Note that DEBUG-level messages are not intended for customers; they are for F5 developers and support personnel.

alert-service - The Alert Service runs on both system controllers and on each blade. “Alarm” is the user-facing term for alerts. Applications can send AlertNotification or ThresholdNotification messages to their local alert service. The blades and the standby controller forward all alert messages to the alert service running on the active controller, which aggregates all alerts and publishes them to ConfD.

api-svc-gateway - API service gateway is designed to share information between the F5OS layer and the F5OS Tenant layer.

2023-03-02T17:13:54.026196-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5801000000000005 msg="" text="send_zmq_request: type FPGAMGR_MSG len 17#012".

2023-03-02T17:13:54.026481-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1677795234026424838 id="1677769706046478812:1" seq=25256 mtype="FPGAD_REPLY" src="addr:tcp://*:1049" dest="api-svc-gateway".

2023-03-02T17:13:54.026491-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5801000000000005 msg="" text="fpgamgr_zmq_reply_handler: msgid 51, token 415206256#012".

2023-03-02T17:13:54.026494-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5801000000000005 msg="" text="fpgamgr_reply_handler: found callback for token 415206256#012".

2023-03-02T17:13:55.027701-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1677795235027686383 id="1677769627848389263:1" seq=25260 mtype="SEND_TYPE" src="api-svc-gateway" dest="addr:tcp://localhost:1049".

2023-03-02T17:13:55.027717-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5801000000000005 msg="" text="send_zmq_request: type FPGAMGR_MSG len 17#012".

2023-03-02T17:13:55.028007-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1677795235027943142 id="1677769706046478812:1" seq=25257 mtype="FPGAD_REPLY" src="addr:tcp://*:1049" dest="api-svc-gateway".

2023-03-02T17:13:55.028018-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5801000000000005 msg="" text="fpgamgr_zmq_reply_handler: msgid 51, token 431991664#012".

2023-03-02T17:13:55.028023-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5801000000000005 msg="" text="fpgamgr_reply_handler: found callback for token 431991664#012".

2023-03-02T17:13:55.543064-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5801000000000005 msg="" text="Processing Tenant Event".

2023-03-02T17:13:55.544046-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5802000000000001 msg="unknown tag in operation" CFG_ITER="tenant cfg iter" TAG=171991382 OP=4.

2023-03-02T17:13:55.544986-05:00 appliance-1 api-svc-gateway[11]: priority="Debug" version=1.0 msgid=0x5801000000000005 msg="" text="Done with tenant event processing".

appliance-orchestration-manager - Appliance orchestration manager

audit-service - Audit message handling service

authd - The authentication service (authd) manages the configuration settings for AAA (Authentication, Authorization, Accounting).

2023-03-07T19:56:20.682344-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1678236980681562078 id="" seq=0 mtype="HealthMessage" src="" dest="tcp://127.0.0.1:3509".

2023-03-07T19:56:20.682393-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1678236980682383532 id="1678217274187775734:0" seq=1313 mtype="HealthMessage" src="addr:tcp://*:3509" dest="".

2023-03-07T19:56:20.682535-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1678236980682492047 id="" seq=0 mtype="HealthMessage" src="" dest="tcp://127.0.0.1:3509".

2023-03-07T19:56:20.682558-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1678236980682552774 id="1678217274187775734:0" seq=1314 mtype="HealthMessage" src="addr:tcp://*:3509" dest="".

2023-03-07T19:56:50.682214-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1678237010681917146 id="" seq=0 mtype="HealthMessage" src="" dest="tcp://127.0.0.1:3509".

2023-03-07T19:56:50.682253-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1678237010682245853 id="1678217274187775734:0" seq=1315 mtype="HealthMessage" src="addr:tcp://*:3509" dest="".

2023-03-07T19:56:50.682371-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1678237010682336184 id="" seq=0 mtype="HealthMessage" src="" dest="tcp://127.0.0.1:3509".

2023-03-07T19:56:50.682388-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1678237010682384529 id="1678217274187775734:0" seq=1316 mtype="HealthMessage" src="addr:tcp://*:3509" dest="".

2023-03-07T19:57:20.681724-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1678237040681443161 id="" seq=0 mtype="HealthMessage" src="" dest="tcp://127.0.0.1:3509".

2023-03-07T19:57:20.681764-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1678237040681756663 id="1678217274187775734:0" seq=1317 mtype="HealthMessage" src="addr:tcp://*:3509" dest="".

2023-03-07T19:57:20.681890-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1678237040681843884 id="" seq=0 mtype="HealthMessage" src="" dest="tcp://127.0.0.1:3509".

2023-03-07T19:57:20.681906-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1678237040681902126 id="1678217274187775734:0" seq=1318 mtype="HealthMessage" src="addr:tcp://*:3509" dest="".

2023-03-07T19:57:46.611093-05:00 appliance-1 authd[8]: priority="Debug" version=1.0 msgid=0x3901000000000115 msg="HTTPD configuration file unchanged from previous config." name="/etc/auth-config/tls/httpd-options.conf".
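
In the Send Message and Receive Message lines above, the time field appears to be a Unix epoch value in nanoseconds: converted to the same UTC offset, it lands within about a millisecond of the timestamp at the start of the line. This is an inference from the samples rather than a documented guarantee. A minimal Python sketch of the conversion, using a hypothetical helper named `epoch_ns_to_datetime`:

```python
from datetime import datetime, timedelta, timezone


def epoch_ns_to_datetime(ns, utc_offset_hours=0):
    """Convert an epoch value in nanoseconds to a timezone-aware datetime.

    Assumes the value counts nanoseconds since 1970-01-01 UTC, which is an
    inference from the sample log lines. Sub-microsecond digits are dropped
    because datetime stores microseconds at most.
    """
    seconds, nanos = divmod(int(ns), 1_000_000_000)
    tz = timezone(timedelta(hours=utc_offset_hours))
    return datetime.fromtimestamp(seconds, tz).replace(microsecond=nanos // 1000)


# time= value taken from the first authd sample line, shown at UTC-05:00
print(epoch_ns_to_datetime(1678236980681562078, -5).isoformat())
```

Comparing this value with the timestamp at the start of the line gives a rough view of the delay between the message event and the moment the log entry was written.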

confd-key-migrationd - Confd Primary Key Migration Service

2023-03-07T19:59:26.636776-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000029 msg="Beginning step" step="SET_PEER_CONFD_ROMODE".

2023-03-07T19:59:26.636797-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000029 msg="Beginning step" step="BACKUP_SECURE_ELEMENTS".

2023-03-07T19:59:26.638451-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000018 msg="Specified maapi element does not exists in cdb" path="/system/logging/f5-logging:tls/f5-logging:key".

2023-03-07T19:59:26.638645-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000018 msg="Specified maapi element does not exists in cdb" path="/system/aaa/authentication/ldap/tls_key".

2023-03-07T19:59:26.638724-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000018 msg="Specified maapi element does not exists in cdb" path="/system/aaa/authentication/ldap/bindpw".

2023-03-07T19:59:26.638999-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000018 msg="Specified maapi element does not exists in cdb" path="/system/aaa/tls/config/passphrase".

2023-03-07T19:59:26.639063-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000018 msg="Specified maapi element does not exists in cdb" path="/system/aaa/tls/config/key".

2023-03-07T19:59:26.639253-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000018 msg="Specified maapi element does not exists in cdb" path="/system/logging/tls/key".

2023-03-07T19:59:26.639477-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000031 msg="debug:" msg="elem: bigip-tenant1".

2023-03-07T19:59:26.639694-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000018 msg="Specified maapi element does not exists in cdb" path="/tenants/tenant{bigip-tenant1}/state/unit-key".

2023-03-07T19:59:26.639753-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000031 msg="debug:" msg="elem: bigip-tenant1".

2023-03-07T19:59:26.640329-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000018 msg="Specified maapi element does not exists in cdb" path="/system/diagnostics/proxy/config/proxy-password".

2023-03-07T19:59:26.640730-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000029 msg="Beginning step" step="UPDATE_KEY".

2023-03-07T19:59:26.662258-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1678237166662247137 id="1678237166662231913:12" seq=0 mtype="ActionRequest" src="libhalsvc" dest="addr:tcp://localhost:1046".

2023-03-07T19:59:26.663030-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1678237166662975272 id="" seq=0 mtype="ActionResponse" src="" dest="libhalsvc".

2023-03-07T19:59:26.663289-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x401000000000024 msg="Send Message" time=1678237166663282866 id="1678237166663275196:13" seq=0 mtype="ActionRequest" src="libhalsvc" dest="addr:tcp://localhost:1046".

2023-03-07T19:59:26.675999-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x401000000000022 msg="Receive Message" time=1678237166675937671 id="" seq=0 mtype="ActionResponse" src="" dest="libhalsvc".

2023-03-07T19:59:26.846487-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000029 msg="Beginning step" step="RELOAD_CONFD_CONFIG".

2023-03-07T19:59:26.932454-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000029 msg="Beginning step" step="RESTORE_SECURE_ELEMENTS".

2023-03-07T19:59:26.933166-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000031 msg="debug:" msg="Restoring secure elem path: /tenants/platform-self-signed-cert/self-signed-key".

2023-03-07T19:59:26.933886-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000031 msg="debug:" msg="Restoring secure elem path: /tenants/tenant{bigip-tenant1}/config/unit-key".

2023-03-07T19:59:26.934306-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000031 msg="debug:" msg="Restoring secure elem path: /system/diagnostics/ihealth/config/password".

2023-03-07T19:59:26.934638-05:00 appliance-1 confd-key-migrationd[8]: priority="Debug" version=1.0 msgid=0x1b01000000000031 msg="debug:" msg="Restoring secure elem path: /system/aaa/confd-restconf-token/secret".